AI Hallucination: Understanding, Causes, Risks, and Prevention Strategies

Artificial intelligence has reached a point where it can write essays, generate code, and assist in critical decision-making. Yet one persistent limitation continues to challenge its reliability: AI hallucination—the generation of plausible but incorrect or fabricated information. This phenomenon is not a minor technical glitch; it is a systemic issue rooted in how modern AI systems are designed and trained.

This article provides a comprehensive, evidence-based exploration of AI hallucinations, including their causes, types, risks, detection methods, and prevention strategies. It also examines the broader implications for trust, user experience, and ethical deployment.

Key Takeaways

- Probabilistic Nature: Models predict likely word sequences rather than verifying objective truths.

- Authoritative Fabrications: AI presents errors with high confidence and fluent language.

- Knowledge Gaps: Hallucinations often arise where training data is sparse, missing, or contradictory.

- Contextual Failure: Vague prompts can push models to guess and invent details.

- Risk Factors: Fabricated facts create legal, financial, and ethical liabilities.

- Grounding Solutions: Retrieval-augmented generation (RAG) connects AI to external data to improve accuracy.

- Human Necessity: Human review remains the most dependable safeguard for factual integrity, especially in high-stakes uses.

What Is AI Hallucination?

AI hallucination refers to outputs generated by models—particularly large language models (LLMs)—that are factually incorrect but presented with confidence and fluency.

Unlike traditional software bugs, hallucinations are not random errors. They emerge from the probabilistic nature of AI systems, which generate responses based on likelihood rather than verified truth. As a result, an AI may invent citations, fabricate statistics, or misinterpret instructions while appearing authoritative.
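A toy illustration of "likelihood rather than verified truth" follows. It samples the next token from a tiny hand-made distribution. The probabilities are invented for illustration and do not come from any real model. Real models choose among tens of thousands of tokens and often apply temperature or other decoding settings, so treat this as a simplified sketch. The point is that nothing in the sampling step checks whether the chosen word is true.

```python
import random

# Toy next-token distribution for the prompt "The capital of Australia is".
# These probabilities are invented for illustration; they are not from a real model.
NEXT_TOKEN_PROBS = {"Canberra": 0.62, "Sydney": 0.30, "Melbourne": 0.08}


def sample_next_token(probs: dict[str, float], rng: random.Random) -> str:
    """Pick one token in proportion to its probability. Nothing here checks truth."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


def wrong_answer_rate(probs: dict[str, float], correct: str,
                      trials: int = 10_000, seed: int = 0) -> float:
    """Estimate how often sampling produces a fluent but wrong completion."""
    rng = random.Random(seed)
    wrong = sum(sample_next_token(probs, rng) != correct for _ in range(trials))
    return wrong / trials


if __name__ == "__main__":
    # Greedy decoding would always pick "Canberra" here, but sampling names a
    # wrong city roughly 38% of the time (1 - 0.62) under these toy numbers.
    print(f"{wrong_answer_rate(NEXT_TOKEN_PROBS, 'Canberra'):.1%}")
```

Every sampled answer is equally fluent. The wrong ones are simply less probable, and that is why they can appear with full confidence.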

In practice, hallucinations often manifest as:

- Fabricated references or research papers

- Incorrect factual claims

- Misleading summaries of real data

- Confident answers to questions with no known solution

A defining characteristic is that the output is linguistically coherent but epistemically unreliable—making it particularly difficult to detect.

How AI Hallucinations Emerged

Early AI systems, such as rule-based expert systems, rarely hallucinated because they relied on predefined logic and structured databases. However, modern generative AI models—trained on vast datasets—operate differently.

The shift began with neural networks and deep learning, where models learned patterns rather than explicit rules. With the rise of transformer-based architectures, hallucinations became more visible due to the models’ ability to generate long-form text.

Researchers now recognize hallucination as an inherent byproduct of probabilistic modeling. Some studies suggest it may even be “an innate limitation” of large-scale language models.

At the same time, newer models—despite improved reasoning capabilities—may hallucinate in subtler ways, embedding inaccuracies within otherwise coherent explanations.

Causes of AI Hallucination

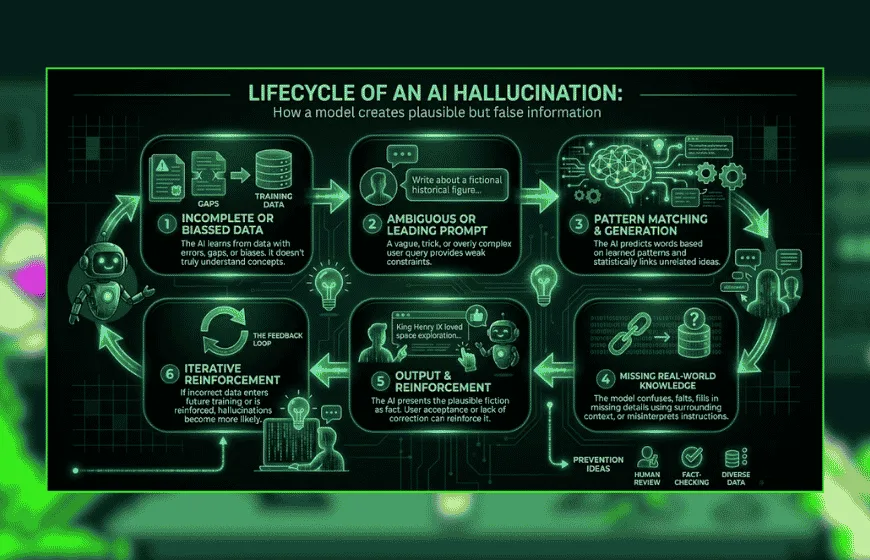

Understanding the causes of AI hallucination is essential for both mitigation and responsible deployment. The causes are commonly grouped into three categories:

1. Data-Related Causes

AI models are only as reliable as their training data. Hallucinations often arise from:

- Incomplete or biased datasets

- Noisy or contradictory information

- Lack of domain-specific grounding

IBM notes that hallucinations can result from training data bias, inaccuracies, and overfitting.

When models encounter gaps in knowledge, they may “fill in” missing information with statistically plausible guesses.

2. Model Architecture and Training

The architecture of LLMs contributes directly to hallucination risk. These systems are optimized to predict the next token (a word or word fragment) in a sequence, not to verify facts.

Critically, many models are trained and evaluated in ways that reward fluent, complete answers over correctness. Research suggests that hallucinations persist partly because models are incentivized to produce plausible responses rather than admit uncertainty.

This leads to confident but incorrect outputs, especially in ambiguous or complex queries.
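A little arithmetic makes the incentive concrete. Assume, for illustration only, a grading rule that awards +1 for a correct answer, 0 for "I don't know", and minus a penalty λ for a wrong answer. A model that is right with probability p then expects p - (1 - p)λ from guessing. Guessing therefore beats abstaining only when p > λ / (1 + λ). Under plain accuracy scoring (λ = 0) that threshold is 0, so a guess is never worse than admitting uncertainty:

```python
def expected_guess_score(p_correct: float, wrong_penalty: float) -> float:
    """Expected score of answering, under an assumed rule:
    +1 if correct, -wrong_penalty if wrong. Abstaining always scores 0."""
    return p_correct - (1.0 - p_correct) * wrong_penalty


def guessing_threshold(wrong_penalty: float) -> float:
    """Confidence above which answering beats abstaining: penalty / (1 + penalty)."""
    return wrong_penalty / (1.0 + wrong_penalty)


def should_answer(p_correct: float, wrong_penalty: float) -> bool:
    """True when guessing has a strictly higher expected score than abstaining."""
    return expected_guess_score(p_correct, wrong_penalty) > 0.0


if __name__ == "__main__":
    for penalty in (0.0, 1.0, 3.0):
        print(f"penalty={penalty}: answer only above {guessing_threshold(penalty):.2f} "
              f"confidence; guess at 30%? {should_answer(0.30, penalty)}")
```

With these assumed numbers, a 30%-confident guess pays off when wrong answers cost nothing. Once wrong answers carry a penalty of 1 or more, the same guess is discouraged.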

3. Inference and Prompting Issues

Even well-trained models can hallucinate due to how they are used:

- Vague or underspecified prompts

- Conflicting instructions

- Lack of context

User behavior also plays a role. Studies indicate that unclear prompts can increase the likelihood of hallucination, which means the user's phrasing and the model's behavior jointly shape the result.

Types of AI Hallucinations

AI hallucinations are not uniform; they can be categorized based on their origin and manifestation.

1. Factual Hallucinations

The model generates incorrect or fabricated facts (e.g., nonexistent studies or incorrect statistics).

2. Logical Hallucinations

The reasoning process is flawed, even if individual statements appear correct.

3. Contextual Hallucinations

The response deviates from the provided context or user intent.

4. Fabricated References

Common in academic or legal contexts, where models invent citations or sources.

A comprehensive survey highlights that hallucinations can arise at multiple stages—data collection, model training, and inference—forming a lifecycle of risk.

Examples of AI Hallucinations

AI hallucinations are not theoretical—they have caused real-world consequences across industries:

- Legal cases where AI-generated citations were entirely fabricated

- Customer service bots providing incorrect policy information

- AI-generated code referencing nonexistent software packages

In one documented case, a customer service chatbot described a refund policy that did not exist, and the company was later required to honor it.

These examples illustrate that hallucinations can lead to financial loss, reputational damage, and legal exposure.
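The nonexistent-package case above can be partly caught mechanically. The sketch below parses generated Python code with the standard `ast` module. It then reports top-level imports that `importlib.util.find_spec` cannot locate in the current environment. It is an illustration, not a complete safeguard. A missing module may be hallucinated or merely not installed. A name that does resolve is not thereby trustworthy. Flagged names still need a human check before anyone installs anything.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level module names imported by `source` that cannot be found
    in the current Python environment (possible hallucinated packages)."""
    tree = ast.parse(source)
    names = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                names.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Skip relative imports (level > 0); they refer to local files.
            names.add(node.module.split(".")[0])
    return sorted(n for n in names if importlib.util.find_spec(n) is None)


if __name__ == "__main__":
    generated = "import json\nimport totally_made_up_pkg_xyz\nfrom os import path\n"
    print(unresolved_imports(generated))
```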

Risks of AI Hallucinations

1. Misinformation and Decision Errors

Hallucinations can mislead users into making incorrect decisions, particularly in high-stakes fields like healthcare and finance.

2. Erosion of Trust

Trust is a critical factor in AI adoption. When users encounter hallucinations, confidence in the system declines.

Research emphasizes that hallucinations directly impact user trust and long-term engagement with AI systems.

3. Operational and Business Risks

Organizations using AI tools face risks such as:

- Legal liability

- Compliance violations

- Reduced productivity

Hallucinations are increasingly viewed as a governance and risk management issue, not just a technical problem.

4. Ethical Implications

In sensitive domains, hallucinations raise ethical concerns:

- Providing incorrect medical advice

- Generating biased or misleading content

- Amplifying misinformation

These risks highlight the need for responsible AI design and deployment.

Detection of AI Hallucinations

Detecting hallucinations is challenging because outputs often appear credible. However, several approaches are emerging:

1. Uncertainty Estimation

Models can be trained to express confidence levels, helping identify uncertain outputs.
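Training a model to state its confidence is one option. A simpler signal, swapped in here, is the probability the model assigned to its own tokens. The sketch assumes the serving API returns per-token log-probabilities; many do, under differing field names. It turns them into a geometric-mean confidence and also computes the entropy of a single next-token distribution. The 0.5 cut-off is an arbitrary placeholder. These numbers measure how sure the model was about its wording, not whether a claim is true, so a fluent fabrication can still score high.

```python
import math


def sequence_confidence(token_logprobs: list[float]) -> float:
    """Geometric-mean token probability, exp(mean log-prob).
    1.0 means every token was predicted with certainty."""
    if not token_logprobs:
        raise ValueError("need at least one token")
    return math.exp(sum(token_logprobs) / len(token_logprobs))


def entropy(probs: list[float]) -> float:
    """Shannon entropy (in nats) of one next-token distribution; higher = less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def flag_uncertain(token_logprobs: list[float], threshold: float = 0.5) -> bool:
    """Mark an output for extra scrutiny when its average token confidence is low."""
    return sequence_confidence(token_logprobs) < threshold


if __name__ == "__main__":
    confident = [math.log(0.9)] * 5
    shaky = [math.log(0.9), math.log(0.2), math.log(0.15)]
    print(flag_uncertain(confident), flag_uncertain(shaky))
```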

2. External Verification

Cross-referencing outputs with trusted databases or retrieval systems.
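A minimal sketch of cross-referencing follows. It assumes claims have already been extracted from the output as (subject, attribute, value) triples. Claim extraction is a hard step in its own right and is not shown. `TRUSTED_FACTS` is a hypothetical stand-in for a curated database. Real systems also need normalization or entailment checks rather than exact string matching. The key design choice is the third outcome: a claim the reference data cannot confirm is reported as unverifiable, not silently treated as true.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    subject: str
    attribute: str
    value: str


# Hypothetical trusted reference data (a stand-in for a curated database).
TRUSTED_FACTS: dict[tuple[str, str], str] = {
    ("Australia", "capital"): "Canberra",
    ("Canada", "capital"): "Ottawa",
}


def verify(claim: Claim, facts: dict[tuple[str, str], str] = TRUSTED_FACTS) -> str:
    """Return 'supported', 'contradicted', or 'unverifiable' for one claim."""
    known = facts.get((claim.subject, claim.attribute))
    if known is None:
        # No evidence either way: flag for review instead of assuming it is true.
        return "unverifiable"
    return "supported" if known == claim.value else "contradicted"


if __name__ == "__main__":
    for c in (Claim("Australia", "capital", "Sydney"),
              Claim("Canada", "capital", "Ottawa"),
              Claim("Atlantis", "capital", "Poseidonia")):
        print(c, "->", verify(c))
```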

3. Consistency Checks

Comparing multiple responses to detect contradictions.
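One way to sketch a consistency check is to ask the same question several times with sampling enabled. The answers are normalized, and the check measures how many agree with the most common one. The premise is that a model tends to reproduce facts it has learned reliably but to scatter when it is guessing. Comparing strings after trivial normalization is an assumption that only suits short factual answers. Longer outputs need a semantic comparison. The 0.7 threshold is a placeholder.

```python
from collections import Counter


def normalize(answer: str) -> str:
    """Lowercase, trim, drop a trailing period, and collapse whitespace."""
    return " ".join(answer.lower().strip().rstrip(".").split())


def agreement(answers: list[str]) -> tuple[str, float]:
    """Return the most common normalized answer and the share of samples matching it."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(normalize(a) for a in answers)
    top, n = counts.most_common(1)[0]
    return top, n / len(answers)


def looks_consistent(answers: list[str], min_agreement: float = 0.7) -> bool:
    """Low agreement across samples suggests the model may be guessing."""
    return agreement(answers)[1] >= min_agreement


if __name__ == "__main__":
    samples = ["Canberra.", "canberra", "Sydney", "Canberra"]
    print(agreement(samples), looks_consistent(samples))
```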

4. Human-in-the-Loop Validation

Human oversight remains one of the most reliable detection methods.

Research suggests combining multiple detection techniques into a closed-loop system for continuous monitoring and improvement.
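Combining detection techniques can be sketched as a routing rule. The three signals are assumptions for illustration: a confidence score, the agreement rate across resampled answers, and a verification verdict. Both thresholds are assumptions too. What matters is the ordering. Contradicted claims are blocked, anything uncertain or unverifiable goes to a person, and only outputs that pass every check are released.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    confidence: float   # assumed 0..1 score, e.g. from token probabilities
    agreement: float    # assumed 0..1 share of resampled answers that agree
    verification: str   # 'supported', 'contradicted', or 'unverifiable'


def triage(s: Signals, min_conf: float = 0.6, min_agree: float = 0.7) -> str:
    """Route one model output to 'reject', 'human_review', or 'release'."""
    if s.verification == "contradicted":
        return "reject"
    if s.verification == "unverifiable" or s.confidence < min_conf or s.agreement < min_agree:
        return "human_review"
    return "release"


if __name__ == "__main__":
    print(triage(Signals(confidence=0.9, agreement=0.9, verification="supported")))
    print(triage(Signals(confidence=0.4, agreement=0.9, verification="supported")))
```

Closing the loop would mean logging reviewers' verdicts and using them to retune the thresholds over time. That feedback step is not shown here.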

Preventing and Controlling AI Hallucinations

While hallucinations cannot be completely eliminated, they can be significantly reduced through layered strategies.

1. Retrieval-Augmented Generation (RAG)

RAG integrates external knowledge sources into the generation process, grounding outputs in verifiable data.

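This approach is widely regarded as one of the most effective mitigation techniques in both research and industry. It helps only when the retrieved sources are relevant and accurate, though, and a model can still misread or ignore good context.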
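A minimal sketch of the RAG flow follows. It retrieves the passages most relevant to a question, then places them in the prompt with an instruction to answer only from them. The bag-of-words cosine similarity is a deliberately simple swap-in for the embedding-based vector search that production systems typically use. `DOCUMENTS` is a hypothetical knowledge base, and the final call to a model is omitted.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; a real system would index many documents.
DOCUMENTS = [
    "Refunds are available within 30 days of purchase if you have a receipt.",
    "Standard shipping takes 3 to 5 business days.",
    "Gift cards cannot be exchanged for cash.",
]


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    return sorted(docs, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)[:k]


def build_grounded_prompt(question: str, docs: list[str], k: int = 2) -> str:
    """Put the retrieved passages in front of the question so the model can cite them."""
    sources = "\n".join(f"[{i}] {d}" for i, d in enumerate(retrieve(question, docs, k), start=1))
    return (
        "Answer using only the numbered sources below and cite them. "
        "If they do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}\nAnswer:"
    )


if __name__ == "__main__":
    # The resulting prompt would then be sent to a model; that call is not shown.
    print(build_grounded_prompt("Can I get a refund after 30 days?", DOCUMENTS))
```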

2. Prompt Engineering

Carefully designed prompts can reduce ambiguity and guide models toward accurate responses.

Examples include:

- Providing context explicitly

- Asking for sources or citations

- Requesting uncertainty acknowledgment
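The three practices above can be folded into a reusable template. This is a sketch, not a tested recipe. The function name and the wording of each instruction are illustrative assumptions. Such instructions lower the odds of fabrication rather than rule it out, since a model asked for citations can still invent one.

```python
def build_prompt(task: str, context: str, constraints: tuple[str, ...] = ()) -> str:
    """Assemble a prompt that supplies context, asks for sources,
    and invites the model to acknowledge uncertainty."""
    if not context.strip():
        # An empty context leaves the model to fill gaps from memory.
        raise ValueError("provide explicit context for the task")
    lines = [f"Task: {task}", "", "Context:", context.strip(), ""]
    lines += [f"- {c}" for c in constraints]
    lines += [
        "- Cite the part of the context that supports each factual claim.",
        "- If the context is insufficient or you are unsure, say so instead of guessing.",
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_prompt(
        task="Summarize the refund policy for a customer in two sentences.",
        context="Refunds are available within 30 days of purchase if you have a receipt.",
        constraints=("Do not mention policies that are not in the context.",),
    ))
```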

3. Model Fine-Tuning and Alignment

Fine-tuning models on high-quality, domain-specific data can improve accuracy within that domain and reduce hallucination risk, provided the training data itself is accurate.

4. Multi-Model and Agent Systems

Advanced systems use multiple models to validate outputs. One model generates content, while others verify or refine it.

This “agentic” approach has shown promising results in reducing hallucinations and improving reliability.
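A minimal sketch of the generate-then-verify pattern follows. `generate` and `verify` are hypothetical callables that would each wrap a call to a different model. The stubs in the demo only show the control flow. A verifier model can be wrong too, and two models trained on similar data may share blind spots. For that reason, an answer that is never approved falls back to a human rather than being released.

```python
from typing import Callable, Optional, Tuple

# Hypothetical interfaces: in practice each would wrap a call to a different model.
Generator = Callable[[str, Optional[str]], str]     # (question, feedback) -> draft
Verifier = Callable[[str, str], Tuple[bool, str]]   # (question, draft) -> (ok, feedback)


def generate_with_review(question: str, generate: Generator, verify: Verifier,
                         max_rounds: int = 3) -> Optional[str]:
    """Let one model draft and another critique, feeding critiques back as hints.
    Returns None if no draft is approved, signalling escalation to a human."""
    feedback: Optional[str] = None
    for _ in range(max_rounds):
        draft = generate(question, feedback)
        ok, feedback = verify(question, draft)
        if ok:
            return draft
    return None


if __name__ == "__main__":
    # Stub "models" standing in for real ones.
    def drafter(question: str, feedback: Optional[str]) -> str:
        return "Canberra" if feedback else "Sydney"

    def checker(question: str, draft: str) -> Tuple[bool, str]:
        return draft == "Canberra", "The capital named is wrong; check a reference."

    print(generate_with_review("What is the capital of Australia?", drafter, checker))
```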

5. Human Oversight

Despite advances in automation, human validation remains essential—especially in high-risk applications.

Experts emphasize that AI outputs should always be critically evaluated rather than blindly trusted.

Are There AI Models Without Hallucinations?

The short answer: no.

All current generative AI systems are susceptible to hallucinations due to their probabilistic nature. Some models reduce hallucination rates through improved training and architecture, but none eliminate the problem entirely.

Emerging approaches, such as hybrid systems combining neural networks with symbolic reasoning, aim to improve reliability. However, these systems often trade flexibility and creativity for accuracy.

User Experience and Trust Considerations

AI hallucinations have a direct impact on user experience:

- Users may over-trust confident outputs

- Subtle errors are harder to detect

- Repeated inaccuracies reduce engagement

As AI becomes more integrated into daily workflows, managing user expectations is critical. Systems should:

- Communicate uncertainty clearly

- Provide citations or evidence

- Encourage verification

Ultimately, trust is not built solely on accuracy—but on transparency and reliability.
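Those three recommendations can be applied at the presentation layer. The sketch assumes the system already has a 0-to-1 confidence score and a list of sources for each answer. Both are hypothetical inputs here, and the source title in the demo is made up. The label bands are placeholders, and a displayed confidence is only as meaningful as the score behind it.

```python
def confidence_label(confidence: float) -> str:
    """Map a 0..1 score to a coarse label; the band edges are arbitrary assumptions."""
    if confidence >= 0.8:
        return "High confidence"
    if confidence >= 0.5:
        return "Medium confidence"
    return "Low confidence"


def present_answer(answer: str, confidence: float, sources: list[str]) -> str:
    """Render an answer with its uncertainty, its evidence, and a nudge to verify."""
    lines = [answer, "", f"{confidence_label(confidence)} ({confidence:.0%})"]
    if sources:
        lines.append("Sources:")
        lines += [f"  - {s}" for s in sources]
    else:
        lines.append("No supporting sources were found for this answer.")
    lines.append("Please verify important details before relying on them.")
    return "\n".join(lines)


if __name__ == "__main__":
    print(present_answer("Refunds are available within 30 days.", 0.86,
                         ["Refund policy, section 2"]))
```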


Comparative Insight: High vs. Low Hallucination Models

Different AI architectures exhibit varying levels of hallucination risk:

- General-purpose LLMs: High flexibility, higher hallucination risk

- Domain-specific models: Lower hallucination risk due to specialized training

- Hybrid (neurosymbolic) systems: Improved accuracy, reduced flexibility

This trade-off highlights a fundamental tension in AI design: balancing creativity with correctness.

The Future of AI Hallucination Mitigation

Research is moving toward more robust solutions, including:

- Improved evaluation metrics that reward uncertainty

- Better alignment between training objectives and real-world use

- Integration of reasoning and verification systems

Some experts argue that hallucinations will never disappear entirely but can be managed through system-level design improvements and governance frameworks.

Conclusion

AI hallucination is not a temporary flaw; it is a fundamental challenge rooted in how modern AI systems operate. While significant progress has been made in understanding and mitigating hallucinations, they remain an inherent risk in generative AI.

The path forward lies in layered mitigation strategies, combining technical solutions like RAG and uncertainty estimation with human oversight and ethical design. Organizations and practitioners must treat AI outputs not as definitive answers, but as probabilistic suggestions requiring validation.

As AI continues to evolve, managing hallucinations will be critical to building trustworthy, reliable, and responsible systems.

Frequently Asked Questions

1. What exactly is an AI hallucination?

An AI hallucination occurs when a large language model (LLM) generates an output that is linguistically fluent and confident but factually incorrect or completely fabricated. These are not random bugs but a result of the model predicting the most likely next word rather than verifying facts.

2. Why do AI models make up facts instead of admitting they don't know?

Most AI models are trained to prioritize fluency and provide complete responses. Because they operate on probability, they often "fill in" gaps in their training data with plausible-sounding information rather than acknowledging a lack of knowledge.

3. What are the main causes of these errors?

Hallucinations generally stem from three areas: incomplete or biased training data, model architectures that prioritize word prediction over truth-seeking, and vague user prompts that provide insufficient context.

4. How can I tell if an AI is hallucinating?

Detection methods include cross-referencing AI claims with trusted external databases, using "uncertainty estimation" to see how confident the model is, and performing human-in-the-loop validation to verify citations and logic.

5. What is Retrieval-Augmented Generation (RAG)?

RAG is a prevention strategy that connects an AI model to a specific, trusted external knowledge source. Instead of relying solely on its internal memory, the model retrieves relevant documents to "ground" its answer in real-world facts.

6. Can AI hallucinations be completely stopped?

Currently, no. Because generative AI is probabilistic by nature, the risk of hallucination is an inherent limitation. However, using tools like RAG, expert prompt engineering, and human oversight can significantly reduce the frequency of errors.

7. What are the real-world risks of ignoring AI hallucinations?

The risks include spreading misinformation, legal liability (such as using fake court citations), financial loss due to incorrect business advice, and a general erosion of user trust in AI systems.