Can a Computer Really Pass for a Human?

Human conversation feels simple on the surface, yet it hides surprising complexity. Many people wonder if a computer can ever talk the way we do.

Quick Insights

• The Turing Test in AI asks whether a computer can sound convincingly human in conversation.

• Early chatbots used scripts or personas to hide their limits, rather than show deep understanding.

• Modern systems use machine learning to improve fluency, yet they still struggle with context and intuition.

• The test does not measure consciousness, only conversational behavior.

• New challenges now explore reasoning, emotion, and other forms of machine intelligence.

A Simple Question That Changed AI Forever

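Alan Turing opened a famous paper with a bold question: “Can machines think?” He then swapped it for one he could actually test: can a machine hold a conversation that passes for human?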

This idea felt radical in 1950, because researchers often argued about consciousness, free will, or whether the mind needed a special “spark.” Turing decided to ignore all that and focus on something simple. He wanted to see if a computer could participate in a normal conversation without giving itself away.

How the Turing Test Works

The test uses a conversation game. A human judge exchanges typed messages with two unseen players. One player is human. The other is a computer. If the judge cannot reliably tell them apart, then the computer “passes.”
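
One way to make “reliably” concrete is to count how often the judge guesses right across many sessions. The short Python sketch below does that. The function names, the sample data, and the 0.7 cutoff are assumptions for illustration; the cutoff loosely echoes the 70 percent figure in Turing’s 1950 prediction and is not an official rule.

```python
def judge_accuracy(verdicts):
    """Fraction of sessions in which the judge correctly picked out the machine."""
    if not verdicts:
        raise ValueError("need at least one session")
    return sum(verdicts) / len(verdicts)


def machine_passes(verdicts, max_accuracy=0.7):
    """Hypothetical pass rule: the judge is right no more than max_accuracy of the time."""
    return judge_accuracy(verdicts) <= max_accuracy


# Each entry records one session: True if the judge identified the machine.
# These ten results are invented for the example.
sessions = [True, False, True, True, False, False, True, False, True, False]

print(judge_accuracy(sessions))  # 0.5
print(machine_passes(sessions))  # True
```

With these ten invented sessions the judge is right half the time, no better than a coin flip, so the sketch reports a pass.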

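This setup seems easy to understand, yet it also reveals a deeper truth. Human conversation requires knowledge, rhythm, memory, and intuition. These features make communication natural, and machines must imitate them to succeed. Turing predicted that by about the year 2000, machines with roughly a billion bits of storage (on the order of 100 megabytes) would play the game well enough that an average judge would have no more than a 70 percent chance of identifying them correctly after five minutes of questioning. But things didn’t unfold exactly as he expected.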

The Turing Test in AI: What the Early Programs Tried to Solve

The Turing Test measures whether a computer can respond like a human in conversation. Early attempts relied on clever tricks rather than true intelligence.

The First Chatbots Tried to Act Human in Simple Ways

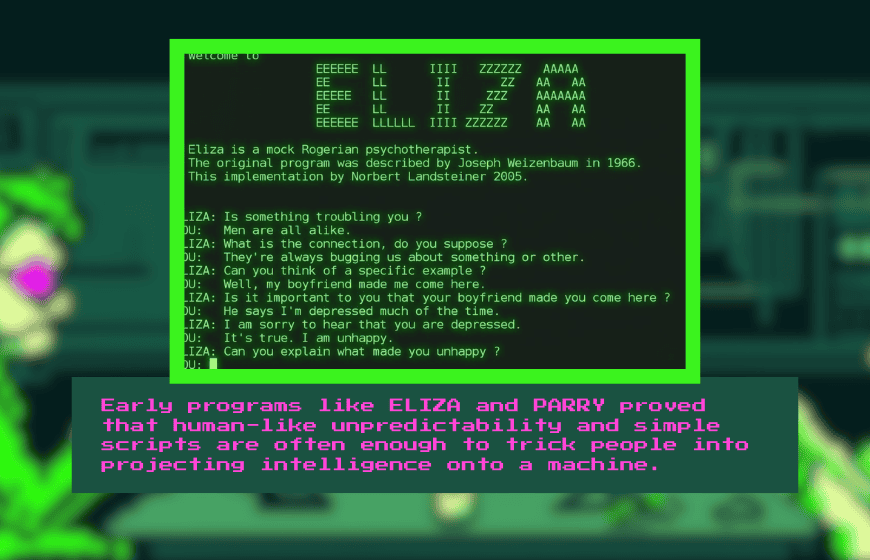

In the 1960s, a program called ELIZA used short scripts to mimic a therapist. It encouraged users to talk by repeating parts of their sentences. Even though it followed simple rules, many people felt heard. That reaction revealed how humans often project intelligence onto anything that seems to “talk back.”
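
The repeat-it-back trick is easy to sketch. The Python below is a minimal illustration in the spirit of ELIZA, not the original script: the three rules, the pronoun table, and the function names are all made up for this example.

```python
import re

# Pronoun swaps so the user's words can be echoed back from the program's side.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my",
}

# (pattern, reply template) pairs, tried in order. Illustrative rules only.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "Tell me more about your {0}."),
]

DEFAULT_REPLY = "Please go on."


def reflect(fragment):
    """Swap first- and second-person words in a captured fragment."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(message):
    """Return the first matching scripted reply, or a generic prompt."""
    cleaned = message.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return DEFAULT_REPLY


print(respond("I feel ignored by my family."))
# Why do you feel ignored by your family?
print(respond("The weather is nice."))
# Please go on.
```

Nothing here models meaning. The program matches a pattern, flips a few pronouns, and hands the user’s own words back, which is enough to keep a conversation moving.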

In the early 1970s, another program named PARRY took the opposite approach. It acted like a paranoid patient who redirected every topic toward its own fears. Judges found the behavior believable because unpredictability can sometimes feel human. These early experiments taught researchers that humans fill in many conversational gaps without realizing it.

Competitions Pushed Chatbots to Use Clever Personas

As the years passed, competitions pushed programmers to test new ideas. Some chatbots focused on narrow topics to hide their limits. For example, one chatbot named Catherine responded well about Bill Clinton but struggled outside that subject. Another chatbot, Eugene Goostman, posed as a 13-year-old Ukrainian boy. People forgave errors because they assumed his age and non-native English explained the mistakes.

This approach showed that personality design could help a machine “pass,” even without deep understanding. Yet it also raised questions about what counts as genuine intelligence.

Statistical and Modern Approaches Improved Fluency

Later systems, such as Cleverbot, used large conversation databases. They analyzed previous human text and chose likely responses. This method made some replies feel surprisingly natural. However, these systems struggled with consistency. They changed tone quickly, forgot earlier statements, and failed when topics moved beyond their stored conversations.
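
To see why a database-driven bot can sound natural and still lose the thread, consider the toy retrieval sketch below. It is not Cleverbot’s actual algorithm. The three-line log, the word-overlap score, and the `min_score` cutoff are assumptions chosen to keep the example small.

```python
import re

# Tiny stand-in for a conversation log: (something a person said, what came next).
# A real system would hold far more exchanges; these rows are invented.
LOG = [
    ("hello there", "Hi! How are you today?"),
    ("what is your favorite food", "I like pizza a lot."),
    ("do you like music", "Yes, mostly jazz."),
]


def tokens(text):
    """Lowercase a sentence and return its set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a, b):
    """Word overlap between two token sets, from 0.0 (none) to 1.0 (identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def reply(message, min_score=0.2):
    """Return the logged reply whose prompt best overlaps the message."""
    words = tokens(message)
    scored = [(jaccard(words, tokens(prompt)), answer) for prompt, answer in LOG]
    best_score, best_reply = max(scored, key=lambda item: item[0])
    if best_score < min_score:
        return "I'm not sure what you mean."
    return best_reply


print(reply("Do you like jazz music?"))     # Yes, mostly jazz.
print(reply("Explain quantum tunneling."))  # I'm not sure what you mean.
```

Each call looks only at the current message, so the sketch has no memory of earlier turns, and anything outside the log falls through to a stock answer.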

Modern systems rely on natural language processing and machine learning to expand their range. They recognize patterns, track context, and adjust style. Despite these strengths, many still stumble on simple moments. A filler like “umm…” or a vague sentence can confuse a system unless it clearly fits a known pattern.

Why the Test Is Still Hard to Pass Today

Although today’s computers can land spacecraft and assist in surgeries, basic conversation remains tricky. Human language depends on background knowledge, assumptions, and emotional cues. For instance, the sentence “I took the juice out of the fridge and gave it to him, but forgot to check the date” requires understanding storage habits, spoilage, and everyday routines.

Machines often misread these subtle layers. They may respond literally when the moment requires intuition. As a result, many chatbots still fail to maintain long, natural conversations that feel completely human.

Common Misconceptions About the Turing Test

Many believe a machine “passes” once it fools a few people online. However, the original test involved controlled conditions and consistent performance. Another misconception is that the test measures consciousness. It doesn’t. It only measures behavior. The deeper question “Is consciousness necessary for intelligence?” remains open.

Modern Alternatives and New Questions

Because the Turing Test focuses mostly on conversation, researchers now explore other methods. The Winograd Schema Challenge, for example, tests commonsense reasoning by asking what an ambiguous pronoun refers to. Some tests measure emotional fluency, which has become more important as generative AI grows. These new ideas try to capture abilities that conversation alone cannot reveal.
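
A Winograd schema is a pair of sentences that differ by one word, where that word changes what a pronoun refers to. The Python sketch below encodes a widely quoted pair (paraphrased here) and runs a deliberately naive guesser against it. The `Schema` class and the `nearest_noun_baseline` function are hypothetical names for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Schema:
    sentence: str
    candidates: tuple
    answer: str


# One word changes ("big" vs "small"), and the referent of "it" flips.
PAIR = [
    Schema(
        "The trophy doesn't fit in the suitcase because it is too big.",
        ("trophy", "suitcase"),
        "trophy",
    ),
    Schema(
        "The trophy doesn't fit in the suitcase because it is too small.",
        ("trophy", "suitcase"),
        "suitcase",
    ),
]


def nearest_noun_baseline(schema):
    """Naive guess: pick the candidate mentioned last before the pronoun."""
    before_pronoun = schema.sentence.split(" it ")[0]
    return max(schema.candidates, key=before_pronoun.rfind)


correct = sum(nearest_noun_baseline(s) == s.answer for s in PAIR)
print(f"{correct} of {len(PAIR)} correct")  # 1 of 2 correct
```

The guesser gives the same answer for both sentences, so it can only ever get half the pair right. Scoring both halves requires knowing how trophies and suitcases behave, which is exactly the kind of everyday knowledge the test is built to probe.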

Where This Leaves Us Today

As AI systems evolve, they inch closer to Turing’s original goal. Yet they also force us to revisit the older questions he set aside. Can an artificial machine really “think”? Does intelligence require neurons, or can it appear in code? These debates continue as generative AI models learn new ways to interact.

Still, the central idea stays the same. If a machine can talk in a natural, intuitive way, then the line between human and computer becomes harder to spot.

FAQs

What is the Turing Test in simple terms?

It is a conversation test where a judge tries to distinguish between a human and a computer based only on their responses.

Has any computer truly passed the Turing Test?

Some systems have fooled judges briefly, but whether any has passed consistently under strict conditions remains debated.

Did ELIZA really understand what users said?

No. ELIZA followed scripted patterns and reflected user input without genuine comprehension.

Why is human conversation difficult for AI?

Human language relies on context, shared knowledge, emotional nuance, and intuition, which are hard to replicate fully.

Does passing the Turing Test mean a machine is conscious?

No. The test evaluates behavior in conversation, not awareness or subjective experience.

Are there alternatives to the Turing Test?

Yes. Modern benchmarks measure reasoning, logical consistency, and emotional understanding in addition to conversation.

Will AI eventually be indistinguishable from humans?

AI is improving rapidly, but maintaining deep, consistent, human-like conversation remains a significant challenge.