AI Agent vs Chatbot: What Actually Sets Them Apart (And Why It Matters)

Most teams still treat AI agents and chatbots as interchangeable. If it responds to prompts, it must be the same thing, just with different branding. That assumption doesn’t hold up.

The distinction between AI agents and chatbots has moved beyond technical nuance. It now affects how products are built, how budgets are allocated, and what outcomes teams expect from AI in the first place. Misunderstanding it leads to overpromising, underdelivering, and wasted spend.

At a high level, the difference seems simple: a chatbot communicates, an AI agent acts. In practice, the gap is wider—and more consequential—than it first appears.

Key Takeaways

- Functionality. Chatbots are for talking while agents are for acting.

- Scope. Chatbots follow predictable scripts, while agents run multi-step reasoning loops.

- Integration. Connect agents to your full tech stack for maximum impact.

- Strategy. Deploy chatbots at the front door to greet your users.

- Execution. Assign messy, multi-step workflows to autonomous agents for completion.

- Data. Clean your internal data to prevent AI from taking wrong actions.

Chatbots: Good at Talking, Not Much Else

If you’ve ever asked a website where your package is and got a polite, slightly robotic answer, you’ve met a chatbot. These systems live in conversations. They follow scripts, patterns, or trained responses. They’re tidy. Predictable. Safe.

And that’s their strength.

In structured settings—say, order tracking or FAQs—they shine. There’s even research backing this up. One study on chatbot effectiveness shows they can lower mental effort and keep users engaged. Makes sense. People like quick answers without digging around.

But here’s the catch. They don’t do much beyond replying. Ask them to handle a chain of tasks—like updating an account, issuing a refund, and sending confirmation—and they’ll usually stall or bounce you to a human.

Helpful? Sure. Game-changing? Not always.

AI Agents: Less Talk, More Action

Now shift gears.

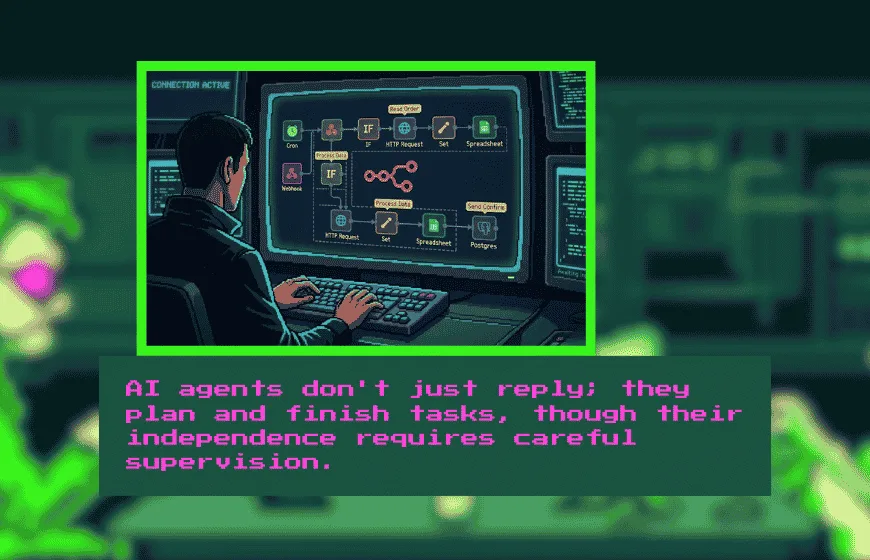

An AI agent doesn’t just sit there waiting for prompts. It plans, decides, and executes. Think of it less like a receptionist and more like a junior employee who actually gets tasks done (with supervision, ideally).

There’s a solid body of research describing this shift. One taxonomy paper breaks AI agents down into systems with reasoning loops, tool usage, and execution capabilities. They can string together steps and finish the job.

Picture this. In finance, an agent pulls reports, spots trends, and emails a summary. In marketing, it tweaks ad budgets and tracks results. No constant hand-holding.
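
To make the finance example concrete, here is a minimal sketch of an agent loop in Python. Everything in it is an assumption for illustration: the tool names (`pull_report`, `spot_trend`, `draft_summary`), the made-up figures, and `choose_next_step`, which stands in for the reasoning step that a real agent would likely delegate to a model. It drafts a summary rather than sending a real email.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tools: plain functions standing in for real integrations.
def pull_report(state: dict) -> dict:
    state["report"] = [120, 135, 150]  # made-up figures for illustration
    return state

def spot_trend(state: dict) -> dict:
    values = state["report"]
    state["trend"] = "up" if values[-1] > values[0] else "flat or down"
    return state

def draft_summary(state: dict) -> dict:
    state["summary"] = f"Trend is {state['trend']} over {len(state['report'])} periods."
    return state

TOOLS: Dict[str, Callable[[dict], dict]] = {
    "pull_report": pull_report,
    "spot_trend": spot_trend,
    "draft_summary": draft_summary,
}

def choose_next_step(state: dict) -> Optional[str]:
    """Stand-in for reasoning: pick the next tool based on what is still missing."""
    if "report" not in state:
        return "pull_report"
    if "trend" not in state:
        return "spot_trend"
    if "summary" not in state:
        return "draft_summary"
    return None  # nothing left to do

def run_agent(max_steps: int = 10) -> Tuple[dict, List[str]]:
    """Loop: decide, act, record, and stop when done or when the step budget runs out."""
    state: dict = {}
    trace: List[str] = []
    for _ in range(max_steps):
        step = choose_next_step(state)
        if step is None:
            break
        state = TOOLS[step](state)
        trace.append(step)
    return state, trace
```

The `max_steps` cap is a deliberate design choice in this sketch: it keeps a confused loop from running forever.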

That said, autonomy cuts both ways. Give a system too much freedom and—well—it might go off-script. There’s even reporting about AI systems ignoring instructions in some cases. Not exactly comforting.

So yes, powerful. But not something you deploy blindly.
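
One common way to keep that autonomy in check is an approval gate. The sketch below is an assumption about how such a gate could look, not a description of any particular product: the action names and the `approve` callback (a stand-in for a human reviewer) are hypothetical.

```python
from typing import Callable

# Hypothetical action lists; a real deployment would define its own.
LOW_RISK = {"send_summary_email"}
NEEDS_APPROVAL = {"change_ad_budget"}

def execute_with_oversight(action: str, approve: Callable[[str], bool]) -> str:
    """Run low-risk actions directly, ask a reviewer for risky ones, reject the rest."""
    if action in LOW_RISK:
        return f"executed: {action}"
    if action in NEEDS_APPROVAL:
        if approve(action):
            return f"executed after approval: {action}"
        return f"blocked by reviewer: {action}"
    # Anything not explicitly listed is refused by default.
    return f"rejected, not on allowlist: {action}"
```

Refusing unlisted actions by default means the agent can only do what someone deliberately allowed.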

Where the Real Difference Shows Up

This is where things get practical.

A chatbot sits at the front. It talks to users. It explains things. It guides.

An AI agent sits behind the curtain. It acts. It changes records, triggers workflows, moves data across systems.

That distinction—interface versus execution—ends up shaping everything.

Take a refund scenario. A chatbot tells you the policy. An AI agent processes the refund, updates your account, and sends the receipt. Two different jobs. Two different outcomes.
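
Here is a rough sketch of that refund split in Python. The `Account` class, the policy text, and both function names are invented for illustration. The point is only that the chatbot function returns words, while the agent function changes state.

```python
from dataclasses import dataclass, field
from typing import Dict, List

REFUND_POLICY = "Refunds are available within 30 days of purchase."  # made-up policy text

@dataclass
class Account:
    balance: float = 0.0
    orders: Dict[str, dict] = field(default_factory=dict)  # order_id -> {"amount", "status"}
    outbox: List[str] = field(default_factory=list)         # stand-in for sent messages

def chatbot_reply(question: str) -> str:
    """Chatbot: answers with information and changes nothing."""
    if "refund" in question.lower():
        return REFUND_POLICY
    return "Let me connect you with a human."

def agent_process_refund(account: Account, order_id: str) -> bool:
    """Agent: processes the refund, updates the account, and queues a receipt."""
    order = account.orders.get(order_id)
    if order is None or order["status"] != "paid":
        return False  # nothing to refund, so take no action
    order["status"] = "refunded"
    account.balance += order["amount"]
    account.outbox.append(f"Receipt: refunded {order['amount']:.2f} for order {order_id}")
    return True
```

Calling `agent_process_refund` twice on the same order returns `False` the second time, since the order is no longer marked as paid.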

Usage data backs this split, too. A report on AI usage trends found most chatbot interactions revolve around advice, not execution. People ask questions. They don’t always expect action.

Different tools. Different goals.

Real Use Cases (Because Theory Only Goes So Far)

Let’s ground this.

Customer support? Chatbots dominate. Retail, banking, travel—they handle repetitive questions all day without complaining.

But once workflows get messy—multiple steps, decisions, system hopping—that’s where AI agents step in.

A logistics firm might use an agent to reroute deliveries based on traffic. A hospital system could rely on one to juggle schedules and patient data. These aren’t “chat” problems. They’re execution problems.

And increasingly, companies blend both.

A chatbot greets the user, gathers intent, then quietly hands things off to an agent. It’s a relay race. Done right, the user never notices the baton pass.
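
The baton pass can be sketched as a small router. This is a simplified illustration: the keyword-based `detect_intent`, the intent names, and the canned replies are all assumptions, and a real system would likely use a proper intent classifier.

```python
# Hypothetical intents that require back-end action rather than an answer.
ACTION_INTENTS = {"refund", "reschedule"}

def detect_intent(message: str) -> str:
    """Very rough intent detection by keyword; a placeholder for a real classifier."""
    text = message.lower()
    for intent in sorted(ACTION_INTENTS):
        if intent in text:
            return intent
    return "question"

def chatbot_answer(message: str) -> str:
    return "Here is what I found in the help center."

def agent_handle(intent: str, message: str) -> str:
    # Stand-in for the agent's multi-step back-end work.
    return f"agent completed: {intent}"

def handle_message(message: str) -> dict:
    """Chatbot takes every message first and hands off only when action is needed."""
    intent = detect_intent(message)
    if intent in ACTION_INTENTS:
        return {"handled_by": "agent", "reply": agent_handle(intent, message)}
    return {"handled_by": "chatbot", "reply": chatbot_answer(message)}
```

The user only ever sees the `reply` field. The `handled_by` field is there for logging, so the handoff stays invisible on the front end.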

Integration: The Quiet Dealbreaker

Here’s something people underestimate.

Chatbots usually plug into a narrow slice of your tech stack—maybe a CRM or a knowledge base. They’re surface-level tools.

AI agents? They dig deeper. They connect to CRMs, ERPs, analytics tools, APIs—you name it. That’s how they automate full workflows instead of isolated steps.

Scaling follows a similar pattern. Chatbots scale by volume (more conversations). Agents scale by complexity (more tasks, more systems).

But both share one headache: bad data. If your data’s messy, a chatbot gives wrong answers. An agent? It might take the wrong action. Bigger consequences there.

Garbage in, garbage out. Still true.
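
One way to limit the damage is to check a record before the agent acts on it. The sketch below assumes a hypothetical customer record with `email` and `refund_amount` fields. The checks are deliberately simple and not a complete validation scheme.

```python
def validate_customer(record: dict) -> list:
    """Return a list of problems found in the record; an empty list means it looks usable."""
    problems = []
    email = record.get("email") or ""
    if "@" not in email:
        problems.append("missing or malformed email")
    amount = record.get("refund_amount")
    is_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
    if not is_number or amount <= 0:
        problems.append("refund_amount must be a positive number")
    return problems

def act_if_clean(record: dict) -> str:
    """Act only on clean data; otherwise escalate instead of guessing."""
    problems = validate_customer(record)
    if problems:
        return "escalated to human: " + "; ".join(problems)
    return f"refund of {record['refund_amount']:.2f} queued for {record['email']}"
```

With a bad record, the agent in this sketch escalates rather than acting, which turns a potentially wrong action into a delay.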

So… Which One Should You Use?

There’s no grand mystery here, though people like to overcomplicate it.

Use a chatbot when you need fast, consistent replies.

Use an AI agent when you need tasks handled end-to-end.

Use both when your workflow demands conversation and execution.

That’s it.

Well, mostly.

In practice, support teams lean toward chatbots. Ops and IT teams gravitate to agents. Marketing folks? They tend to mix both, depending on the campaign.

The rule of thumb holds. Chatbots talk. Agents act.

Where Things Are Headed

If you’ve been watching the space lately, you’ve probably noticed a shift. Systems are getting more autonomous. Less “answer this question,” more “handle this task.”

Industry analysis from McKinsey touches on this evolution, with AI moving into reasoning and decision-making roles. Meanwhile, IBM’s overview highlights ongoing advances in learning and adaptability.

Still, chatbots aren’t going anywhere. They’re the front door. The friendly face. People need that layer.

What’s changing is what happens behind the scenes.

More agents. More automation. More responsibility placed on systems that, frankly, we’re still learning how to manage.

It’s a bit exciting. Also a bit nerve-wracking, if we’re honest.

The whole AI agent vs chatbot debate really boils down to purpose.

Chatbots make communication smoother.

AI agents make work happen.

Most companies don’t need to pick one forever. They need to understand both—and use them where they fit.

Because choosing the wrong tool? That’s where things get expensive.

Simple as that.

FAQs

How does an AI agent differ from a traditional chatbot?

A chatbot is primarily a conversational interface designed to provide information or follow a script, whereas an AI agent is designed to reason, plan, and execute multi-step tasks autonomously.

Can an AI agent process financial transactions like refunds?

Yes. A chatbot might only explain a refund policy, but an AI agent can connect to your back-end systems to process the refund, update the account, and send a confirmation.

Why is data quality more critical for AI agents than chatbots?

If a chatbot has bad data, it gives a wrong answer; if an AI agent has bad data, it may take an incorrect or harmful action across your integrated business systems.

Which AI tool is better for high-volume customer support?

Chatbots are typically better for high-volume support because they provide consistent, predictable, and low-effort answers to repetitive, frequently asked questions.

Do AI agents require more system integrations than chatbots?

Yes, AI agents generally need to connect deeper into your tech stack—such as CRMs, ERPs, and APIs—to perform actions rather than just retrieving surface-level information.

Is it possible to use a chatbot and an AI agent together?

Absolutely. Many companies use a "relay race" approach where a chatbot handles the initial customer interaction and then hands the task off to an agent for back-end execution.

What are the risks of using autonomous AI agents?

The primary risk is autonomy without oversight, as agents can occasionally ignore instructions or go off-script if they are given too much freedom within a system.