Will 40% of AI Agent Projects Fail by 2027?

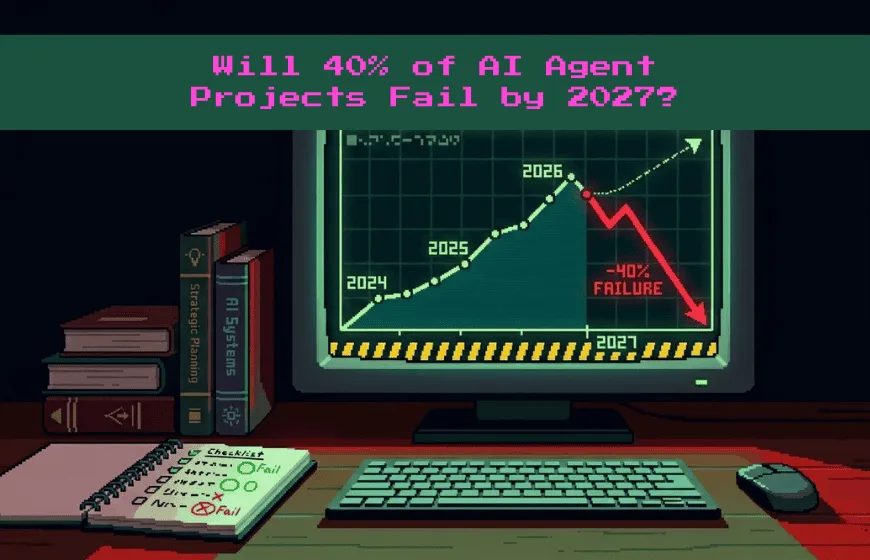

AI agents sound exciting. They promise automation, smarter decisions, and better user experiences. However, analysts now predict that up to 40% of AI agent projects could be canceled by 2027.

That number raises a serious question. Are companies overestimating what AI agents can deliver?

Let’s analyze AI agent project viability: why many projects fail, what makes others succeed, and how to evaluate real-world potential.

Quick Insights

- AI agents are autonomous systems that perform tasks using data.

- Analysts predict up to 40% of projects may be canceled by 2027.

- High expectations and technical limits cause many failures.

- Data quality and funding strongly influence project sustainability.

- Healthcare, finance, e-commerce, and manufacturing show strong AI potential.

- Clear objectives and human oversight improve project viability.

What Are AI Agents and Why Do They Matter?

AI agents are autonomous software systems. They perform tasks and make decisions using data.

For example, Amazon’s Alexa answers questions and controls smart home devices. Similarly, Apple’s Siri schedules reminders and sends messages. More advanced AI systems power driver-assistance features like Tesla’s Autopilot or automate workflows in banks and hospitals.

Because these systems act independently, businesses expect them to reduce costs and increase efficiency. However, high expectations often collide with technical reality.

Why So Many AI Agent Projects May Be Canceled

Predictions about AI agent cancellations reflect common patterns. Companies often launch projects quickly, but long-term sustainability requires more than enthusiasm.

Let’s explore the main factors behind cancellations.

High Expectations vs. Technical Reality

AI agents often begin with bold promises. Executives imagine fully autonomous systems replacing human work.

However, AI struggles with edge cases and ambiguous inputs. For instance, a customer support chatbot may answer simple billing questions perfectly. Yet it may fail when a user presents a complex complaint.

When performance falls short, leadership may cancel the project. Therefore, realistic goal setting becomes essential.

Technical Complexity and Data Challenges

AI agents depend on quality data. Without clean and structured datasets, performance declines.

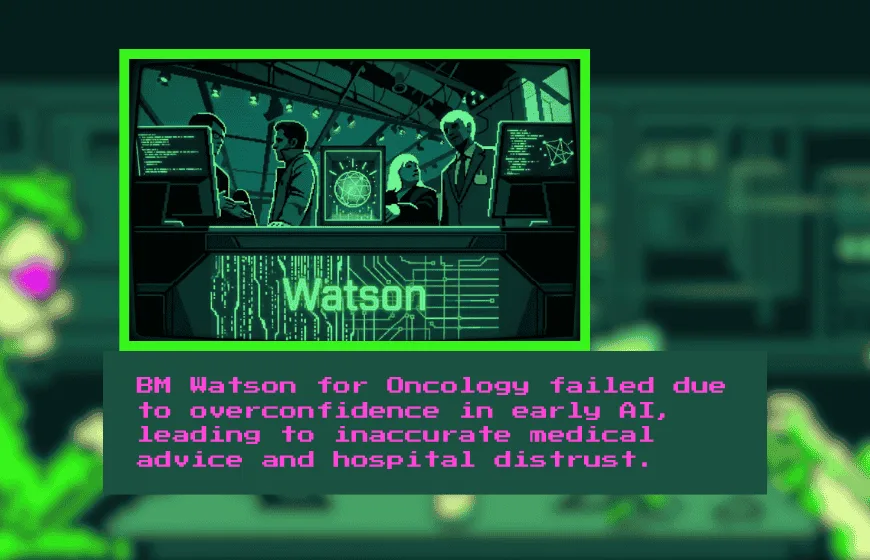

Take IBM Watson for Oncology as an example. The system aimed to recommend cancer treatments. However, hospitals reported inconsistent recommendations that lacked clinical validation.

This case shows that machine learning models cannot compensate for incomplete or biased data. As a result, technical limitations often derail ambitious AI agent projects.

Market Saturation and Weak Differentiation

The AI agent market has grown crowded. Many startups build similar chatbots or automation tools.

If a new product fails to offer clear advantages, customers ignore it. For example, dozens of AI customer support agents compete with tools from Zendesk and Salesforce.

Without strong differentiation, funding dries up. Eventually, projects shut down.

Funding and Investment Pressure

AI development requires significant resources. Companies must invest in infrastructure, cloud computing, and specialized talent.

If investors lose confidence, projects face immediate risk. For example, healthcare AI startups often encounter regulatory delays. Those delays increase costs and reduce investor patience.

Therefore, financial sustainability strongly influences AI agent viability.

Lessons from High-Profile AI Project Failures

History offers useful insights. Examining canceled AI agent projects reveals recurring themes.

Microsoft’s Tay chatbot launched on Twitter in 2016. However, users manipulated Tay into producing offensive content, and Microsoft took it offline roughly 16 hours after launch. The failure stemmed from insufficient safeguards.

Google Duplex impressed audiences in 2018 by making restaurant reservations over the phone. Yet concerns about transparency slowed broader adoption. Users questioned whether the people receiving those calls should be told they were speaking to an AI.

IBM Watson for Oncology faced criticism for inaccurate recommendations. Hospitals eventually reduced reliance on the system. Overconfidence in early capabilities played a role.

These cases show that AI agents require strong testing, ethical design, and gradual deployment.

Where AI Agents Are Most Likely to Succeed

Despite challenges, AI agents remain valuable in many industries.

Healthcare continues exploring AI for diagnostics and administrative automation. For example, AI tools assist radiologists in detecting anomalies in medical scans.

Finance also benefits from AI agents. Banks use AI systems for fraud detection and algorithmic trading. Because financial data is structured, AI performs more reliably.

E-commerce platforms like Amazon use AI agents to personalize product recommendations. These systems analyze purchase history and browsing behavior effectively.

Manufacturing offers another promising area. AI agents support predictive maintenance and supply chain optimization. When machines signal potential failures early, companies avoid downtime.

In each case, success depends on clear objectives and structured environments.

How to Evaluate AI Agent Project Viability

If you plan to invest in AI agents, follow a simple framework.

First, define a narrow and measurable use case. Instead of aiming to replace an entire department, focus on one repeatable task.

Second, ensure high-quality data exists. Without reliable data pipelines, AI models cannot function correctly.

Third, build human oversight into the system. AI agents should assist professionals, not operate without supervision.

Fourth, test the solution gradually. Pilot programs reveal weaknesses before large-scale deployment.

Finally, evaluate long-term funding and maintenance requirements. AI systems require continuous updates and monitoring.

By following these steps, organizations reduce the risk of cancellation.
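The five steps above can be treated as a go/no-go checklist. The following is a minimal sketch of that idea, not a standard tool: the `ViabilityCheck` class, its field names, and the rule that every step must pass are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ViabilityCheck:
    """One flag per step of the framework (field names are illustrative)."""
    narrow_measurable_use_case: bool
    reliable_data_pipeline: bool
    human_oversight: bool
    pilot_completed: bool
    funding_and_maintenance_plan: bool


def missing_criteria(check: ViabilityCheck) -> list[str]:
    """Return the names of the framework steps that are not yet satisfied."""
    return [name for name, ok in vars(check).items() if not ok]


def is_viable(check: ViabilityCheck) -> bool:
    """Assumed rule: a project is ready only when every step is satisfied."""
    return not missing_criteria(check)


# Hypothetical project: good data and funding, but no oversight or pilot yet.
project = ViabilityCheck(
    narrow_measurable_use_case=True,
    reliable_data_pipeline=True,
    human_oversight=False,
    pilot_completed=False,
    funding_and_maintenance_plan=True,
)
print(missing_criteria(project))  # ['human_oversight', 'pilot_completed']
print(is_viable(project))         # False
```

A real evaluation would likely weigh these factors rather than treat them as simple yes/no flags, but even a basic checklist makes gaps visible before money is committed.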

Common Misconceptions About AI Agent Projects

Many people believe AI agents can operate fully autonomously. In reality, most systems require human oversight.
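One common way to build in that oversight is to let the agent act alone only when it is confident and to hand everything else to a person. The sketch below illustrates the pattern under stated assumptions: the `AgentReply` type, the `confidence` score, and the 0.8 cutoff are hypothetical, and a real system would need its own way of estimating confidence.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.8  # assumed cutoff; tune per use case


@dataclass
class AgentReply:
    text: str
    confidence: float  # hypothetical score in [0, 1] reported by the agent


def route(reply: AgentReply, threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Send confident replies automatically; escalate the rest to a person."""
    return "auto_send" if reply.confidence >= threshold else "human_review"


# A simple billing answer versus an uncertain reply to a complex complaint.
print(route(AgentReply("Your invoice is due on the 5th.", 0.95)))    # auto_send
print(route(AgentReply("I think you may be owed a refund.", 0.40)))  # human_review
```

Raising the threshold sends more work to people but reduces the chance that the agent handles a case it does not understand.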

Others assume machine learning automatically improves performance. However, models only improve when teams provide proper feedback and retraining.

Another misconception involves speed. AI projects often take longer than expected due to integration challenges.

Understanding these realities helps manage expectations effectively.

The Future of AI Agent Viability

Although some AI agent projects will fail, others will mature successfully. Innovation rarely follows a straight path.

As technology advances, natural language processing and deep learning models will improve. Meanwhile, regulatory frameworks will clarify ethical boundaries.

Companies that focus on scalability, data quality, and user-centered design will likely succeed.

Therefore, AI agent viability depends less on hype and more on disciplined execution.

The prediction that 40% of AI agent projects may be canceled by 2027 reflects a market correction. Rapid experimentation leads to both breakthroughs and setbacks.

However, cancellations do not signal failure of the entire field. Instead, they highlight the need for realistic planning and responsible development.

If you approach AI agent projects carefully, you increase your chances of long-term success.

AI agents will continue evolving. The key lies in building them thoughtfully and testing them carefully.

FAQs

Why might 40% of AI agent projects fail by 2027?

Many projects launch with unrealistic expectations, unclear use cases, weak data infrastructure, or insufficient long-term funding.

What is an AI agent?

An AI agent is autonomous software that performs tasks, makes decisions, and interacts with users using machine learning and data analysis.

Are AI agent projects risky investments?

They can be, especially if organizations underestimate technical complexity or overestimate automation capabilities.

What industries are most likely to succeed with AI agents?

Healthcare, finance, e-commerce, and manufacturing look most promising because they offer structured data and well-defined workflows.

How can companies improve AI agent project viability?

By defining narrow objectives, ensuring high-quality data, incorporating human oversight, and testing through pilot programs.

Does AI failure mean the technology is flawed?

No. Failures often reflect poor implementation, unrealistic timelines, or weak governance rather than fundamental flaws in AI.