LLM Challenges: What Actually Gets in the Way (and What People Do About It)

What is often highlighted about large language models is their apparent capability: code produced in seconds, immediate responses, and systems that operate continuously without rest. This framing emphasizes efficiency and scale.

In practice, however, their limitations become evident. These systems exhibit inconsistencies, produce unreliable outputs, and can behave unpredictably under real-world conditions. The challenges are not theoretical; they emerge quickly, even with limited hands-on use.

Rather than treating deployment as a seamless process, it is more accurate to examine where these systems fail, degrade, or require intervention—and how teams attempt to mitigate those issues while maintaining operational stability.

Key Takeaways

- LLMs sound confident but can be wrong.

- Privacy and compliance are major blockers.

- Costs rise quickly at scale.

- Fixes rely on systems, not just better models.

When “Confident” Doesn’t Mean “Correct”

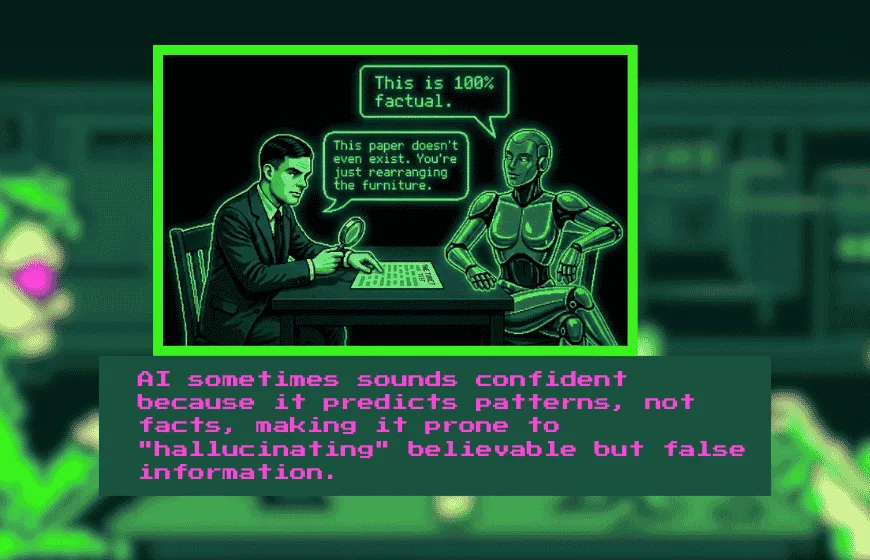

Here’s the uncomfortable part. These models can sound very sure of themselves… even when they’re flat-out wrong.

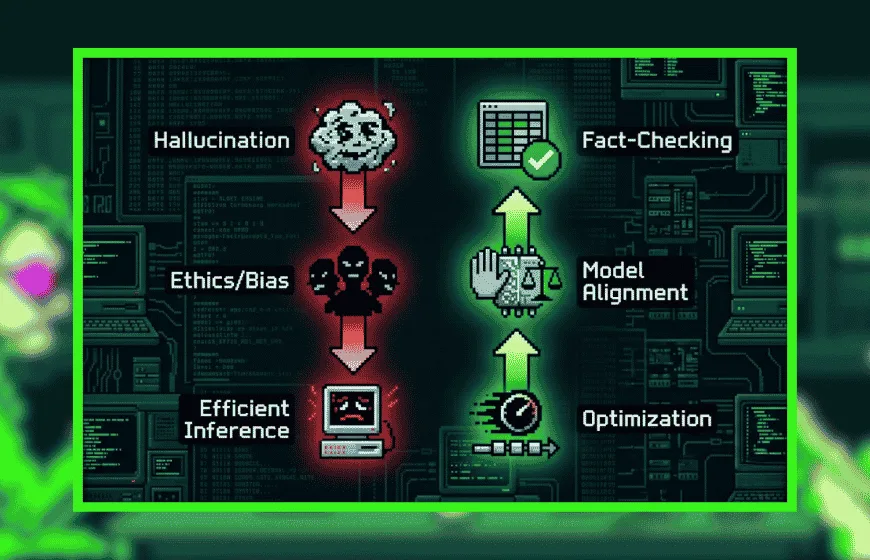

Accuracy is still one of the biggest sticking points in challenges with LLMs. They don’t “know” things the way people do; they guess patterns. Most of the time, it works. Sometimes, it really doesn’t.

You might see a model cite a paper that never existed or casually swap historical dates like it’s rearranging furniture. And in a casual chat, that’s annoying. In a legal or medical context? That’s risky.

Even top-tier systems still stumble on complex reasoning tasks. Not a small issue.

Teams try to manage this by plugging models into external databases (retrieval systems, if you want the formal term) or layering human review on top. It helps. But it also slows things down and adds cost. Trade-offs, always.

Privacy: The Thing That Keeps Legal Teams Awake

Now, if you’ve ever worked inside a company with compliance rules, you already know where this is going.

Data privacy concerns aren’t just a checkbox—they’re a full-on barrier. Especially when sensitive info is involved: medical records, financial data, internal docs. One leak, and things get messy fast.

Regulations like the General Data Protection Regulation (GDPR) don’t leave much room for error. Nor should they.

So what do companies do? They go private. Or semi-private. They lock things down with encryption, restrict API usage, and carefully filter what data even touches the model. It works, more or less, but it’s not plug-and-play. You need planning. And patience. Sometimes more of the second.

The Cost Question Nobody Likes Answering

Let’s be blunt—LLMs aren’t cheap to run at scale.

Sure, testing a prototype feels manageable. But once you’re processing thousands (or millions) of requests? Costs climb. Quickly. That’s where cost efficiency of LLMs becomes a real concern, not just a theoretical one.

Take a support chatbot handling constant traffic. Each interaction adds to compute usage. Over time, it stacks up in a way that can surprise smaller teams.

What helps? A mix of practical tweaks. Smaller models for routine queries. Bigger ones only when needed. Caching repeated responses. It’s not glamorous, but it works. Most of the time.

Integration: Where Good Ideas Slow Down

Here’s something people underestimate—plugging an LLM into an existing system isn’t always smooth.

Actually, it’s often messy.

Legacy systems don’t always play nice. APIs need stitching together. Data pipelines need babysitting. And suddenly, what looked like a quick upgrade turns into a multi-week (or month) project.

Some teams use routing systems—basically smart traffic directors that decide which model handles which request. That helps reduce strain and improves efficiency. But building that kind of setup? It takes skill. And ongoing testing. Lots of it.

Bias and Ethics—Not Just Buzzwords

This one’s tricky because it’s less about code and more about consequences.

LLMs learn from human data. And human data, well, it’s not exactly neutral. Bias creeps in. Sometimes subtly, sometimes not.

A hiring tool, for instance, might favor certain candidates because past data leaned that way. Not intentional. Still a problem.

These biases don’t just disappear on their own. You have to actively look for them.

So teams audit datasets, test outputs across scenarios, and build internal guidelines. It’s ongoing work. Never really “done.”

Different Industries, Different Headaches

What works in one field can fall apart in another. That’s just how it goes.

In healthcare, even a small mistake can be serious. So LLMs there are often limited to safer tasks—summaries, notes, admin stuff.

Finance? Even tighter. Regulations slow everything down, and rightly so.

Retail, on the other hand, leans into user experience. If a chatbot gives weird answers, customers notice. And they leave.

So yes, application of LLMs depends heavily on context. There’s no one-size-fits-all fix here.

Measuring Performance Isn’t as Simple as It Sounds

You’d think accuracy scores would tell the whole story. They don’t.

A model can be technically correct but painfully slow. Or clear, but shallow. Or fast, but confusing. None of those are ideal.

So teams build custom benchmarks—tracking things like latency, response quality, even user satisfaction. It’s more work upfront, but it paints a clearer picture.

And honestly, without that, you’re just guessing.

Keeping Up Feels Like Chasing a Moving Target

LLMs evolve fast. What works today might feel outdated in six months.

That’s why future-proofing matters. Systems need to adapt, not just exist.

Companies that stay ahead tend to invest in continuous updates, retraining, and modular design. According to recent research on large models, fine-tuning over time plays a big role in maintaining performance.

Flexible systems help too—you can swap parts out without tearing everything down. Less risk. More room to experiment.

So, What Actually Helps?

No silver bullet, but a few approaches show up again and again:

- Retrieval systems to cut down hallucinations

- Tight data controls for privacy

- Smart model selection to manage costs

- Routing systems to balance workloads

- Regular monitoring to catch bias and errors early

Companies like OpenAI and Google DeepMind keep refining these ideas in real-world products. Progress is happening, just not in a straight line.

There’s Always a “But”

LLMs are powerful. No argument there.

But they’re not flawless. Accuracy slips, costs rise, systems get complicated, and ethics… well, they don’t solve themselves.

The teams that get the most out of these tools aren’t the ones chasing hype. They’re the ones paying attention—testing, adjusting, questioning, and sometimes stepping back when something doesn’t feel right.

That’s the difference.

FAQs

1. What are the biggest challenges with LLMs?

Accuracy issues, privacy risks, high costs, integration complexity, and bias are the main challenges.

2. Why do LLMs give incorrect answers?

Because they predict patterns rather than verify facts, which leads to hallucinations.

3. How do companies reduce LLM errors?

By using retrieval systems, human review, and continuous monitoring.

4. Why are LLMs expensive to run?

They require significant compute resources, especially at scale with high user demand.

5. What are common solutions for LLM cost optimization?

Using smaller models, caching responses, and routing tasks efficiently.

6. How do privacy concerns affect LLM adoption?

Strict regulations require companies to limit data exposure and secure sensitive information.

7. Is there a perfect solution to LLM limitations?

No, most teams use a combination of tools and strategies to manage trade-offs.