Human Language vs LLM: What Research Actually Says About the Difference

Large language models write fluently. Sometimes they sound indistinguishable from humans.

So what really separates Human language vs LLM output?

The answer becomes clearer when we look at research instead of impressions.

This post breaks down what academic papers, technical reports, and AI researchers actually say about how machine-generated text differs from human communication.

Quick Insights

- Human language relies on lived experience and contextual inference.

- LLM output relies on statistical next-token prediction.

- Academic research warns against equating fluency with understanding.

- Technical reports from OpenAI and Google emphasize architecture and benchmarks, not consciousness.

- LLMs excel in structured reasoning tasks but lack grounded awareness.

- Human communication remains context-rich and socially embedded.

Human Language: Meaning, Context, and Social Experience

Human language does more than transmit information.

It carries shared knowledge, cultural norms, and lived experience.

Linguistics research consistently shows that meaning depends on context. Words change interpretation based on tone, environment, and shared background. For example, the same sentence can express humor, sarcasm, or frustration depending on delivery.

Scholars in pragmatics and sociolinguistics emphasize that humans constantly infer intention. We fill gaps using memory, emotion, and situational awareness.

This layer of interpretation has no direct equivalent in current language models.

What LLM Output Actually Does

Large Language Models generate text through statistical prediction.

According to OpenAI’s GPT-4 Technical Report (2023), the model produces output by predicting the next token based on patterns learned during training. It does not claim understanding or consciousness. Instead, it describes the system as a large-scale predictive model trained on diverse text data.

Similarly, Google DeepMind’s Gemini Technical Report explains that Gemini models optimize performance across reasoning benchmarks using large-scale training and fine-tuning. The report discusses architecture, training scale, and evaluation metrics, not semantic awareness.

In short, LLM output reflects pattern prediction.

It does not reflect internal understanding.

The “Stochastic Parrot” Critique

In 2021, researchers including Emily Bender published the paper “On the Dangers of Stochastic Parrots.”

The paper argues that large language models generate fluent text by recombining patterns from training data. The authors caution that fluency should not be mistaken for comprehension. They describe these systems as statistical pattern generators rather than meaning-based communicators.

This critique directly informs the Human language vs LLM debate.

Human communication involves grounded understanding. LLM output involves probabilistic recombination.

Those are not the same process.

Context and World Models

Another difference appears in contextual grounding.

Research in natural language processing repeatedly shows that LLMs struggle with deep situational reasoning. While they perform well on structured benchmarks, they may fail in edge cases requiring real-world grounding.

OpenAI’s own safety research notes that models can produce “hallucinations,” meaning confident but incorrect statements. This occurs because the system predicts likely text rather than verifying factual reality.

Humans, by contrast, cross-check information using sensory experience and memory.

LLMs simulate context.

Humans inhabit it.

Learning and Adaptation

Human language develops continuously.

Children refine vocabulary through social interaction.

LLMs do not update in real time through conversation. They require retraining or fine-tuning with new datasets. OpenAI and Google both describe training as a structured process involving supervised learning and reinforcement learning.

This distinction affects adaptability.

Humans adjust beliefs during dialogue.

Models adjust only during retraining cycles.

Creativity: Generation vs Origination

LLMs can produce creative writing. They generate poetry, essays, and dialogue. However, technical documentation from OpenAI and Google describes these outputs as pattern-based synthesis.

The systems combine fragments learned during training. They do not create from personal experience.

Human language draws from memory, emotion, and embodied life. That grounding shapes originality in ways statistical systems cannot replicate.

Therefore, when comparing Human language vs LLM, creativity differs at a structural level.

Where LLMs Excel

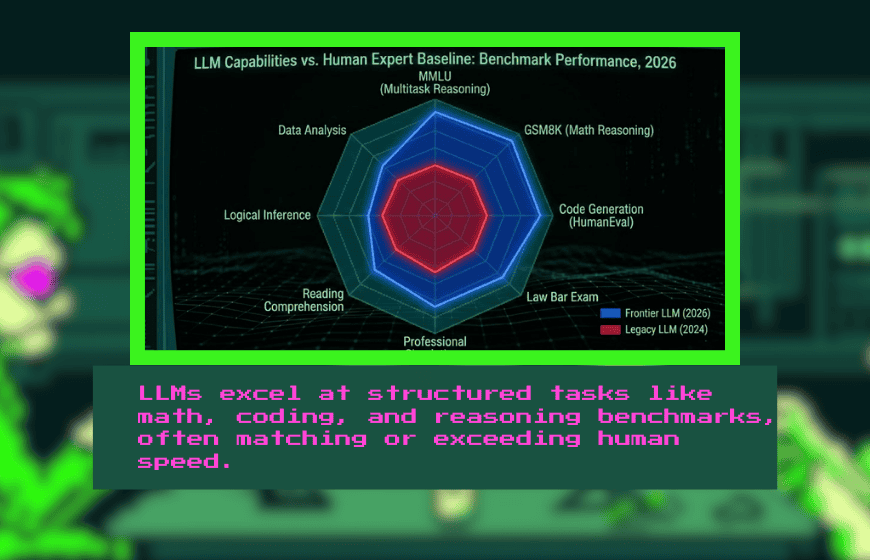

To keep the comparison fair, we should also acknowledge strengths.

LLMs perform strongly on benchmarks such as:

- MMLU (multitask reasoning)

- GSM8K (mathematical reasoning)

- Code generation tasks

Google’s Gemini report highlights competitive reasoning performance. OpenAI’s GPT-4 report describes strong results on professional and academic simulations.

In structured tasks, LLM output can match or exceed human speed.

However, speed does not equal comprehension.

Common Misconception: Fluency Equals Understanding

Fluency can mislead readers.

Because LLM output mirrors human grammar and structure, people often attribute awareness to the system. However, technical reports consistently avoid claims of semantic understanding.

Instead, they describe models as large neural networks trained to optimize next-token prediction.

That difference matters.

Human language operates through lived meaning.

LLM output operates through statistical alignment.

Two Different Systems of Communication

The comparison between Human language vs LLM should not rely on emotion or hype. It should rely on documentation.

Research shows that:

- Humans communicate through grounded, contextual understanding.

- LLMs generate text through probabilistic pattern prediction.

- Fluency does not imply comprehension.

- Benchmarks measure reasoning performance, not awareness.

LLMs remain powerful tools. However, they operate under fundamentally different mechanisms than human communication.

Understanding that distinction helps us use these systems wisely.

FAQs

What is the Turing Test in simple terms?

The Turing Test is an experiment where a human judge talks to both a machine and a person and tries to decide which is human based only on conversation.

Is the Turing Test still relevant today?

Yes. It remains useful for evaluating conversational AI and identifying differences between fluent language generation and deeper understanding.

Has modern AI passed the Turing Test?

Some AI systems perform well in short conversations, but extended dialogue often reveals limitations in context, memory, and consistency.

Is the Turing Test the same as the Human or Not game?

They are conceptually similar. The Human or Not game recreates the imitation game format in a modern online setting.

Does passing the Turing Test prove intelligence?

No. Passing it suggests strong conversational performance but does not prove reasoning depth, awareness, or general intelligence.

What are the limits of the Turing Test?

It focuses on dialogue quality and does not directly measure planning, long-term reasoning, or non-linguistic intelligence.