What Is LLM Grounding? A Complete Guide for AI Practitioners

Large language models (LLMs) have transformed how organizations interact with data—but they come with a fundamental limitation: they generate responses based on patterns learned during training, not real-time understanding. This is where LLM grounding becomes critical.

In simple terms, LLM grounding is the process of connecting a model’s outputs to external, verifiable data sources to improve accuracy, relevance, and trustworthiness.

This guide breaks down what LLM grounding is, how it works, how it differs from fine-tuning, and how to implement it in real-world systems.

What Is LLM Grounding?

LLM grounding refers to augmenting a model’s responses with context from external data sources, rather than relying solely on its pre-trained knowledge.

At a deeper level, grounding aims to ensure that:

- Outputs are tied to real-world, up-to-date information

- Responses are verifiable and auditable

- The model is context-aware for specific use cases

According to Google Cloud, grounding is the ability to connect model output to verifiable sources, reducing the likelihood of hallucinated or fabricated content.

Why this matters

LLMs are limited by their knowledge cutoff, meaning they cannot access information beyond their training data unless augmented with external systems.

Grounding solves this by enabling models to:

- Access real-time or proprietary data

- Provide domain-specific answers

- Maintain factual consistency

Why LLM Grounding Is Essential

1. Reduces Hallucinations

Grounding anchors outputs to factual data, reducing incorrect or fabricated responses.

2. Improves Accuracy and Relevance

With injected context, models can generate outputs tailored to the user’s query and environment.

3. Enables Enterprise Use Cases

Organizations can integrate internal databases, CRM systems, or documentation into AI workflows.

4. Reduces the Need for Constant Retraining

Instead of updating model weights, grounding allows you to update data sources dynamically, saving cost and time.

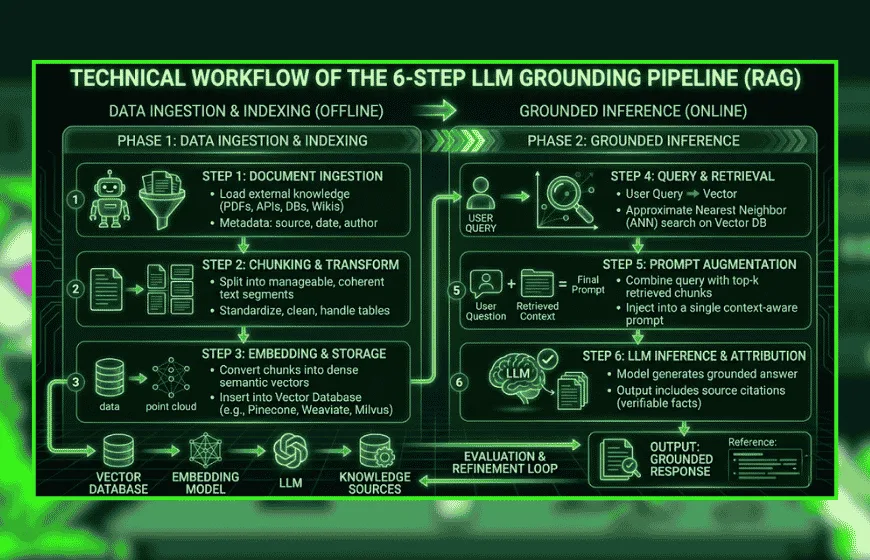

How LLM Grounding Works (Step-by-Step)

At a systems level, grounding typically follows a structured pipeline:

Step 1: Data Collection and Preparation

- Gather structured and unstructured data (documents, APIs, databases)

- Clean and normalize content for indexing
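As a rough illustration of the cleaning step, the Python sketch below normalizes Unicode, strips leftover HTML tags, and collapses whitespace. The function name `normalize_text` is hypothetical, and the regex-based tag removal is a simplification. A real pipeline would more likely use a proper HTML parser.

```python
import re
import unicodedata


def normalize_text(raw: str) -> str:
    """Clean a raw document string before chunking and embedding."""
    # Unify Unicode variants such as full-width letters and non-breaking spaces.
    text = unicodedata.normalize("NFKC", raw)
    # Replace leftover HTML tags with spaces (a crude regex, not a real HTML parser).
    text = re.sub(r"<[^>]+>", " ", text)
    # Collapse runs of whitespace (spaces, tabs, newlines) into a single space.
    text = re.sub(r"\s+", " ", text)
    return text.strip()


docs = ["<p>Refund   policy:\n30 days</p>", "\uff33hipping takes 3\u00a0days."]
print([normalize_text(d) for d in docs])
# ['Refund policy: 30 days', 'Shipping takes 3 days.']
```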

Step 2: Convert Data into Embeddings

Data is transformed into vector representations that capture semantic meaning.
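To make the idea of an embedding concrete, the sketch below uses feature hashing as a stand-in for an embedding model. This is an assumption for illustration only. Hashed bag-of-words vectors capture shared words, not semantic meaning, so a production system would call a trained embedding model instead. The names `embed`, `cosine`, and `DIM` are hypothetical.

```python
import hashlib
import math
import re

DIM = 64  # toy size; real embedding models use hundreds to thousands of dimensions


def embed(text: str, dim: int = DIM) -> list[float]:
    """Toy stand-in for an embedding model, using feature hashing (not semantic)."""
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        # md5 gives a stable bucket across runs; Python's built-in hash() is salted.
        bucket = int(hashlib.md5(token.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    # L2-normalize so the dot product of two vectors equals their cosine similarity.
    return [v / norm for v in vec] if norm else vec


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two vectors that are already unit length."""
    return sum(x * y for x, y in zip(a, b))


query = embed("refund policy")
print(cosine(query, embed("Our refund policy lasts 30 days")))
print(cosine(query, embed("Shipping takes 3 days")))
```

The first score will typically be higher because the texts share words. With a real embedding model, a paraphrase that shares no words with the query could also score highly.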

Step 3: Store in a Vector Database

Embeddings are indexed for efficient similarity search.
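In miniature, a vector database can be pictured as a map from document IDs to unit-length vectors plus their text. The hypothetical `InMemoryVectorStore` below is a sketch, not a real library, and the three-dimensional vectors are made-up values. It also shows why grounding avoids retraining: re-inserting a document under an existing ID simply replaces the old version.

```python
import math


class InMemoryVectorStore:
    """Toy stand-in for a vector database (hypothetical class, not a real library)."""

    def __init__(self) -> None:
        self._records: dict[str, tuple[list[float], str]] = {}

    def upsert(self, doc_id: str, vector: list[float], text: str) -> None:
        """Insert a document, or overwrite it if doc_id already exists."""
        norm = math.sqrt(sum(v * v for v in vector))
        if norm == 0:
            raise ValueError("cannot index a zero vector")
        # Store unit-length vectors so cosine similarity becomes a plain dot product.
        self._records[doc_id] = ([v / norm for v in vector], text)

    def get(self, doc_id: str) -> tuple[list[float], str]:
        return self._records[doc_id]

    def __len__(self) -> int:
        return len(self._records)


store = InMemoryVectorStore()
store.upsert("refunds", [0.9, 0.1, 0.0], "Refunds are issued within 30 days.")
store.upsert("shipping", [0.1, 0.9, 0.2], "Standard shipping takes 3-5 days.")
# Policy changed: overwrite the document in place; no model weights are touched.
store.upsert("refunds", [0.9, 0.1, 0.0], "Refunds are issued within 14 days.")
print(len(store), store.get("refunds")[1])  # 2 Refunds are issued within 14 days.
```

Real vector databases add persistence, metadata filtering, and approximate nearest-neighbor indexes so that search stays fast at scale.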

Step 4: Retrieve Relevant Information

When a query is submitted, the system retrieves the most relevant documents.
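Retrieval then ranks the stored vectors by their similarity to the query vector. The sketch below does a brute-force cosine-similarity scan and keeps the top `k` results with `heapq.nlargest`. The corpus vectors are made-up values standing in for real embeddings, and the `retrieve` helper is hypothetical.

```python
import heapq
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query_vec, corpus, k=2):
    """Return the k (score, doc_id, text) triples most similar to query_vec."""
    # Brute-force scan over every stored vector.
    scored = (
        (cosine(query_vec, vec), doc_id, text)
        for doc_id, (vec, text) in corpus.items()
    )
    return heapq.nlargest(k, scored)


corpus = {
    "refunds": ([0.9, 0.1, 0.0], "Refunds are issued within 30 days."),
    "shipping": ([0.1, 0.9, 0.2], "Standard shipping takes 3-5 days."),
    "returns": ([0.7, 0.3, 0.1], "Items must be unused to be returned."),
}
for score, doc_id, text in retrieve([1.0, 0.2, 0.0], corpus, k=2):
    print(f"{score:.3f} {doc_id}: {text}")
```

With the query vector `[1.0, 0.2, 0.0]`, this returns `refunds` followed by `returns`. A brute-force scan is fine for small collections; larger ones usually rely on approximate nearest-neighbor search.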

Step 5: Augment the Prompt

The retrieved data is inserted into the model’s input context.
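A minimal sketch of prompt augmentation, assuming retrieval has already returned `(doc_id, text)` pairs: each passage is numbered so the model can cite it. The instruction wording and the `build_grounded_prompt` name are illustrative, not a standard template.

```python
def build_grounded_prompt(question: str, passages: list[tuple[str, str]]) -> str:
    """Insert retrieved (doc_id, text) passages into the prompt as numbered sources."""
    sources = "\n".join(
        f"[{i}] ({doc_id}) {text}"
        for i, (doc_id, text) in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the sources below. "
        "Cite sources as [n]. If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_grounded_prompt(
    "How long do refunds take?",
    [
        ("refunds", "Refunds are issued within 30 days."),
        ("returns", "Items must be unused to be returned."),
    ],
)
print(prompt)
```

Asking for numbered citations makes the answer easier to audit, because each claim can be traced back to a specific passage.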

Step 6: Generate Grounded Output

The LLM produces a response using both:

- Its internal knowledge

- The retrieved external data

This process is commonly known as retrieval-augmented generation (RAG)—the dominant grounding technique today.

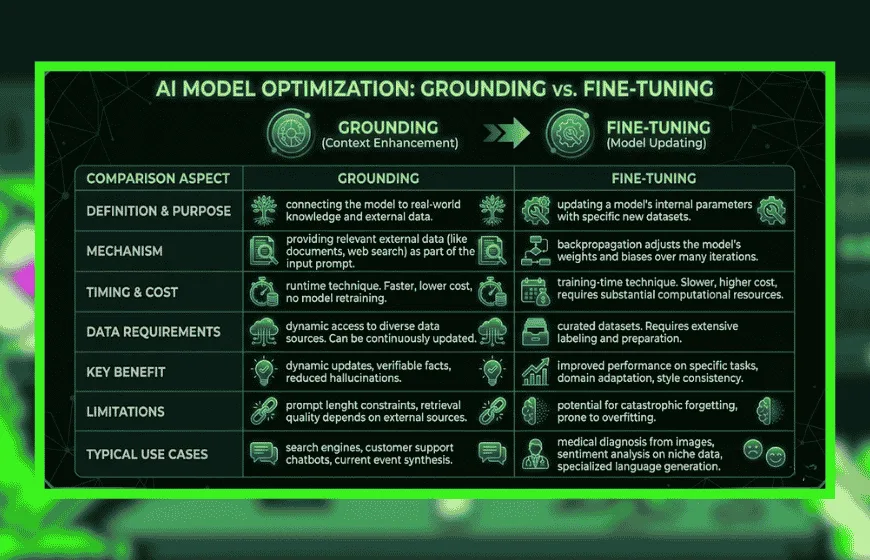

Grounding vs. RAG vs. Fine-Tuning

Understanding these distinctions is critical for implementation decisions.

Grounding vs. RAG

- Grounding = broad concept (any method of anchoring outputs to data)

- RAG = specific technique using retrieval + generation

RAG is essentially the most widely used implementation of grounding.

Grounding vs. Fine-Tuning

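Grounding is typically preferred when the underlying information changes frequently, because updating a data source is cheaper than retraining. Fine-tuning is better suited to behavioral adaptation, such as changing a model's tone, output format, or handling of specialized language.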

Common LLM Grounding Methods

1. Retrieval-Augmented Generation (RAG)

The most common approach:

- Retrieves relevant documents

- Injects them into prompts

- Generates context-aware responses

2. Prompt Grounding

Adds structured context directly into prompts (e.g., CRM data, user history).
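A minimal sketch of prompt grounding, assuming a customer record is already available as a Python dict: the record is serialized to JSON and placed in the prompt alongside recent interaction history. The field names, sample data, and the `prompt_with_customer_context` helper are hypothetical.

```python
import json


def prompt_with_customer_context(question: str, customer: dict, history: list[str],
                                 max_events: int = 3) -> str:
    """Prompt grounding: place structured records directly in the prompt."""
    # sort_keys makes the serialization deterministic for identical records.
    profile = json.dumps(customer, sort_keys=True)
    # Keep only the most recent events to save context-window space.
    recent_events = history[-max_events:] if max_events > 0 else []
    recent = "\n".join(f"- {event}" for event in recent_events)
    return (
        f"Customer profile (JSON): {profile}\n"
        f"Recent interactions:\n{recent}\n\n"
        f"Using only the data above, answer: {question}"
    )


print(prompt_with_customer_context(
    "Which plan is this customer on, and was their last ticket resolved?",
    {"name": "Ana", "plan": "Pro", "tenure_months": 14},
    ["Signed up", "Upgraded to Pro", "Opened ticket #381", "Ticket #381 resolved"],
))
```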

3. Knowledge Graph Grounding

Uses structured relationships between entities to improve reasoning and traceability.
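The sketch below represents a small knowledge graph as (subject, relation, object) triples and collects the facts within a given number of hops of an entity. Each output line maps back to exactly one triple, which is what makes this approach traceable. The triples and helper names are hypothetical; a production system would more likely query a graph database.

```python
from collections import defaultdict

# Hypothetical (subject, relation, object) triples.
TRIPLES = [
    ("WidgetPro", "is_made_by", "Acme"),
    ("WidgetPro", "is_replaced_by", "WidgetMax"),
    ("WidgetMax", "is_made_by", "Acme"),
    ("Acme", "is_headquartered_in", "Springfield"),
]


def build_index(triples):
    """Index each triple under both its subject and its object."""
    index = defaultdict(list)
    for s, r, o in triples:
        index[s].append((s, r, o))
        index[o].append((s, r, o))
    return index


def facts_about(entity, index, hops=1):
    """Collect facts within `hops` edges of `entity` as prompt-ready sentences."""
    seen, frontier, facts = {entity}, {entity}, []
    for _ in range(hops):
        next_frontier = set()
        for e in sorted(frontier):  # sorted for a deterministic output order
            for s, r, o in index.get(e, []):
                line = f"{s} {r.replace('_', ' ')} {o}."
                if line not in facts:
                    facts.append(line)
                for neighbor in (s, o):
                    if neighbor not in seen:
                        seen.add(neighbor)
                        next_frontier.add(neighbor)
        frontier = next_frontier
    return facts


index = build_index(TRIPLES)
print(facts_about("WidgetPro", index, hops=1))
# ['WidgetPro is made by Acme.', 'WidgetPro is replaced by WidgetMax.']
```

Raising `hops` to 2 also pulls in facts about Acme and WidgetMax, such as where Acme is headquartered.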

4. API-Based Grounding

Connects LLMs to real-time APIs (weather, finance, inventory systems).
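A minimal sketch of API-based grounding: data is fetched at query time and injected, with a timestamp, into the prompt. `get_inventory` is a hypothetical stub that returns fixed values instead of calling a real service. Many LLM APIs also offer tool or function calling, which lets the model request such a lookup itself; that flow is not shown here.

```python
from datetime import datetime, timezone


def get_inventory(sku: str) -> dict:
    """Hypothetical stand-in for a live inventory API (a real one would make an HTTP call)."""
    fake_stock = {"WIDGET-42": 17, "GADGET-7": 0}
    return {
        "sku": sku,
        "in_stock": fake_stock.get(sku, 0),
        "as_of": datetime.now(timezone.utc).isoformat(timespec="seconds"),
    }


def grounded_stock_prompt(question: str, sku: str) -> str:
    """Fetch data at query time and inject it, with a timestamp, into the prompt."""
    data = get_inventory(sku)
    return (
        f"Live inventory data (retrieved {data['as_of']}): "
        f"SKU {data['sku']} has {data['in_stock']} units in stock.\n"
        f"Answer using only this data: {question}"
    )


print(grounded_stock_prompt("Can I order WIDGET-42 today?", "WIDGET-42"))
```

Including the retrieval timestamp lets both the model and a reviewer see how fresh the data was.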

5. Hybrid Grounding Systems

Combines multiple methods (RAG + APIs + fine-tuning).

Real-World Use Cases for Grounded LLMs

1. Customer Support Automation

Grounded LLMs can:

- Access internal knowledge bases

- Provide accurate, policy-compliant responses

2. Enterprise Search

Employees can query company data conversationally.

3. Healthcare and Legal Applications

Grounding ensures responses are based on:

- Verified medical literature

- Legal documents

4. Personalized Marketing

Grounding enables:

- Customer-specific recommendations

- Context-aware messaging

5. Financial Services

LLMs grounded in real-time market data improve:

- Risk analysis

- Decision-making

Challenges in Implementing LLM Grounding

Despite its benefits, grounding introduces complexity.

1. Data Quality Issues

Grounding is only as good as the data it retrieves:

- Irrelevant data = poor outputs

- Outdated data = incorrect answers

2. Retrieval Accuracy

Selecting the right documents is critical:

- Too broad → noise

- Too narrow → missing context
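The broad-versus-narrow tradeoff is often controlled with two knobs: how many results to keep (`k`) and a minimum similarity score. The sketch below applies both to made-up retrieval scores; `select_context` and the default values are hypothetical and would need tuning on real data.

```python
def select_context(scored: list[tuple[float, str]], k: int = 3,
                   min_score: float = 0.5) -> list[str]:
    """Keep at most k retrieved doc IDs, dropping any below a relevance threshold."""
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc_id for score, doc_id in ranked[:k] if score >= min_score]


hits = [(0.91, "refunds"), (0.78, "returns"), (0.42, "shipping"), (0.12, "careers")]
print(select_context(hits, k=4, min_score=0.0))  # too broad: includes "careers" noise
print(select_context(hits, k=1, min_score=0.0))  # too narrow: drops "returns"
print(select_context(hits, k=3, min_score=0.5))  # ['refunds', 'returns']
```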

3. Latency and Infrastructure

Grounding requires:

- Vector databases

- Retrieval pipelines

- Additional compute

4. Context Window Limitations

LLMs can only process a limited input length (the context window), which restricts how much retrieved data can be injected.
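One simple way to respect the context window is to pack ranked passages greedily until a token budget is spent. The sketch below approximates tokens by counting words, which is an assumption: real tokenizers often produce more tokens than words, so a production system would count with the model's own tokenizer. `pack_context` is a hypothetical helper.

```python
def pack_context(ranked_passages: list[str], max_tokens: int) -> list[str]:
    """Greedily keep ranked passages that fit within an approximate token budget."""
    packed, used = [], 0
    for text in ranked_passages:
        # Rough assumption: one token per whitespace-separated word.
        cost = len(text.split())
        if used + cost > max_tokens:
            continue  # skip a passage that does not fit; a shorter later one still might
        packed.append(text)
        used += cost
    return packed


ranked = [
    "Refunds are issued within 30 days of purchase.",  # 8 words
    "Items must be unused to be returned.",            # 7 words
    "Gift cards are non-refundable.",                  # 4 words
]
print(pack_context(ranked, max_tokens=12))
# ['Refunds are issued within 30 days of purchase.', 'Gift cards are non-refundable.']
```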

5. Residual Hallucinations

Even grounded models can misinterpret retrieved data or combine sources incorrectly.
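One partial safeguard is to check citations mechanically. The hypothetical `check_citations` helper below assumes the prompt asked the model to cite numbered sources as `[n]`, and flags answers with no citations or with citations to sources that were never provided. It cannot detect a model that cites a real source but misreads it; catching that still requires human review or a separate evaluation step.

```python
import re


def check_citations(answer: str, num_sources: int) -> dict:
    """Flag answers with no [n] citations or with citations to nonexistent sources."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    invalid = sorted(n for n in cited if not 1 <= n <= num_sources)
    return {"has_citation": bool(cited), "invalid": invalid}


print(check_citations("Refunds are issued within 30 days [1].", num_sources=2))
# {'has_citation': True, 'invalid': []}
print(check_citations("Refunds take 90 days [3].", num_sources=2))
# {'has_citation': True, 'invalid': [3]}
```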

How to Implement LLM Grounding in Practice (Checklist)

This is where most guides fall short. Below is a practical framework for implementation:

Phase 1: Define Use Case

- What problem are you solving?

- What data sources are required?

Phase 2: Build Data Pipeline

- Collect and clean data

- Segment into chunks

- Generate embeddings
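Chunking can be as simple as sliding a fixed-size window over a document's words, with some overlap so that text near a boundary appears in two chunks. The sketch below is one such approach. The default sizes are arbitrary illustrative values, and many pipelines split on sentences, headings, or tokens instead.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into overlapping word windows before embedding."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reaches the end of the text
    return chunks


print(chunk_words("one two three four five six seven", chunk_size=4, overlap=1))
# ['one two three four', 'four five six seven']
```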

Phase 3: Choose Retrieval Strategy

- Semantic search

- Hybrid search (keyword + vector)

- Reranking models
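One common way to implement hybrid search is reciprocal rank fusion (RRF), which merges the ranked lists from keyword and vector search by summing 1 / (k + rank) for each document. The sketch below assumes both searches have already returned ordered lists of document IDs; k = 60 is a commonly used default. Reranking models are a separate step and are not shown here.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of doc IDs into one list using RRF."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


keyword_hits = ["returns", "refunds", "warranty"]
vector_hits = ["refunds", "shipping", "returns"]
print(reciprocal_rank_fusion([keyword_hits, vector_hits]))
# ['refunds', 'returns', 'shipping', 'warranty']
```

Here `refunds` ranks first because it sits near the top of both lists, even though keyword search alone ranked `returns` higher.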

Phase 4: Design Prompt Templates

- Inject retrieved context

- Add instructions for citation or reasoning

Phase 5: Integrate LLM

- Connect retrieval system to LLM API

- Test different prompt formats
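A minimal sketch of the integration step: the retriever and the LLM call are passed in as plain functions, so either can be swapped and prompt formats can be compared side by side. `fake_retrieve` and `echo_llm` are hypothetical stubs; in practice `generate` would wrap whichever LLM provider's client library you use.

```python
from typing import Callable

PROMPT_FORMATS = {
    "plain": "Context:\n{context}\n\nQuestion: {question}",
    "strict": (
        "Use only the context below. If it is insufficient, reply \"I don't know.\"\n"
        "Context:\n{context}\n\nQuestion: {question}"
    ),
}


def answer(question: str,
           retrieve: Callable[[str], list[str]],
           generate: Callable[[str], str],
           fmt: str = "strict") -> str:
    """Connect a retriever to an LLM call; both are injected so either can be swapped."""
    context = "\n".join(retrieve(question))
    prompt = PROMPT_FORMATS[fmt].format(context=context, question=question)
    return generate(prompt)


# Hypothetical stubs: replace with a real retriever and a real LLM client call.
def fake_retrieve(question: str) -> list[str]:
    return ["Refunds are issued within 30 days."]


def echo_llm(prompt: str) -> str:
    return prompt  # echoes the prompt so the wiring is visible without an API key


for fmt in PROMPT_FORMATS:
    print(f"--- {fmt} ---")
    print(answer("How long do refunds take?", fake_retrieve, echo_llm, fmt))
```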

Phase 6: Evaluate Performance

Measure:

- Answer accuracy

- Retrieval precision

- Hallucination rate
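These metrics need labeled test data. The sketch below assumes that, for each test question, you have the IDs of the relevant documents, a reference answer, and a judgment of whether the generated answer was supported by its sources. Exact match is a deliberately crude stand-in for answer accuracy; softer measures or an LLM-as-judge are common alternatives. All function names are hypothetical.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved document IDs that are labeled relevant."""
    top = retrieved[:k]
    return sum(doc in relevant for doc in top) / len(top) if top else 0.0


def _normalize(s: str) -> str:
    return " ".join(s.lower().split())


def exact_match_accuracy(predictions: list[str], references: list[str]) -> float:
    """Share of answers matching the reference after case/whitespace normalization."""
    if not references:
        return 0.0
    hits = sum(_normalize(p) == _normalize(r) for p, r in zip(predictions, references))
    return hits / len(references)


def hallucination_rate(supported: list[bool]) -> float:
    """Share of answers a reviewer (human or automated judge) marked as unsupported."""
    return 1 - sum(supported) / len(supported) if supported else 0.0


print(precision_at_k(["refunds", "shipping", "returns"], {"refunds", "returns"}, k=2))  # 0.5
print(exact_match_accuracy(["30 days", "Free  Shipping"], ["30 days", "free shipping"]))  # 1.0
print(hallucination_rate([True, True, False, True]))  # 0.25
```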

Phase 7: Optimize and Iterate

- Improve embeddings

- Refine retrieval logic

- Tune prompt structure

Emerging Trends in LLM Grounding

1. Knowledge Graph + RAG Hybrid Systems

Combining structured reasoning with retrieval for better explainability.

2. Grounding Evaluation Metrics

New research proposes metrics to measure grounding effectiveness and response reliability.

3. Real-Time Grounding

Integration with live data streams (IoT, financial markets, APIs).

4. Multi-Modal Grounding

Grounding across:

- Text

- Images

- Audio

5. Ethical and Responsible AI

Grounding is becoming central to:

- Transparency

- Auditability

- Compliance

Conclusion

LLM grounding is rapidly becoming a foundational component of enterprise AI systems. It bridges the gap between static model knowledge and dynamic, real-world data, enabling more accurate, reliable, and scalable AI applications.

However, grounding is not just a feature—it’s a system design challenge. Success depends on:

- Data quality

- Retrieval strategy

- Prompt engineering

- Evaluation metrics

Organizations that master grounding will gain a significant competitive advantage in deploying trustworthy AI at scale.

Frequently Asked Questions

1. What is the difference between LLM grounding and RAG?

Grounding is the broad concept of linking AI output to verifiable facts. RAG is one specific technique, and the most widely used one, that retrieves relevant data and adds it to the prompt.

2. Is grounding better than fine-tuning for my AI?

Grounding is better for real-time information and lower costs. Fine-tuning is better for changing a model's behavior or specialized language.

3. Does grounding eliminate all AI hallucinations?

No, but it significantly reduces them. Models can still misinterpret the retrieved information or combine different sources incorrectly.

4. How does grounding help with the "knowledge cutoff"?

Grounding allows the AI to search live databases and APIs. This gives the model access to information created after its training ended.

5. What role do vector databases play in grounding?

They store information as embeddings, numerical vectors that capture semantic meaning. This allows the system to find relevant context based on meaning rather than just keywords.

6. Can grounding work with real-time data like stock prices?

Yes, via API-based grounding. This connects the LLM to live data streams for immediate and accurate updates.

7. How does grounding improve enterprise AI safety?

It makes responses auditable and verifiable. Because answers can cite the internal documents they draw on, businesses can check the AI's output against its sources instead of taking it on trust.