What Is the Difference Between Large Language Models and Artificial Intelligence?

Many readers ask: what is the difference between large language models and artificial intelligence? The terms appear in news, apps, and policy debates. They sound similar, but they refer to different layers of the same field.

Artificial intelligence is the broad concept. It covers systems that perform tasks usually associated with human intelligence. Large language models sit inside that field. They focus on language tasks such as writing, answering questions, and translation. This distinction is central to understanding AI vs. LLMs.

Key Takeaways

- AI is the broad field; LLMs are a subset.

- LLMs focus on language, not the full range of intelligent tasks.

- AI includes vision, robotics, and decision systems.

- LLMs generate text from learned patterns; they do not understand it as humans do.

Artificial Intelligence Is a Broad Field with Deep Roots

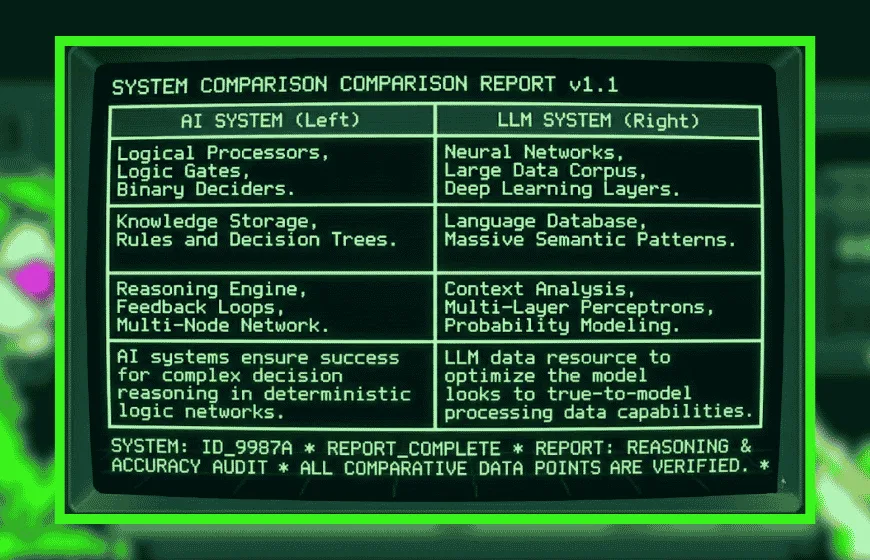

Artificial intelligence describes systems that learn patterns, make decisions, and solve problems. It includes several branches, such as machine learning, robotics, and computer vision. These systems process more than text. They use images, numbers, and signals.

The field has a long history. In 1955, John McCarthy and colleagues coined the term “artificial intelligence” in their proposal for the 1956 Dartmouth summer workshop. Early systems relied on hand-written rules. Later, advances in data and computing power expanded what AI could do.

Today, AI supports many real-world systems. Self-driving cars detect objects and plan routes. Banks use AI to flag fraud. These systems do not depend on language alone. They operate across multiple data types.

Large Language Models Focus on Text and Communication

Large language models are a specific type of AI. They belong to natural language processing, the branch of AI that deals with human language. These models are trained on large text datasets, including books, articles, and online content.

Organizations such as OpenAI have developed systems that can write essays and answer questions. These models generate text by predicting the next word (more precisely, the next token) from patterns learned during training. They do not “understand” in a human sense.
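To make "predicting words based on patterns" concrete, here is a deliberately tiny sketch in Python. It is an assumption-laden stand-in, not how real LLMs work: actual models use large neural networks (transformers) over tokens and vast training data, while this toy simply counts which word follows which (a bigram model). The function names `train_bigrams`, `predict_next`, and `generate` are hypothetical, and the corpus is made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the word seen most often after `word`, or None if `word` was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(follows, start, length=5):
    """Extend a one-word `start` by repeatedly appending the predicted next word."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# Tiny made-up training text; real models train on billions of tokens.
corpus = "the cat sat on the mat . the cat sat on the rug . the dog ate the fish ."
model = train_bigrams(corpus)
print(predict_next(model, "the"))        # cat
print(generate(model, "the", length=5))  # the cat sat on the cat
```

The generated phrase looks grammatical, yet it never appears in the training text. On a very small scale, this shows why fluent output is not the same as verified knowledge.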

Common uses include chatbots, translation tools, and writing assistants. These systems handle text well. However, a text-only model does not control machines or interpret images unless it is paired with other AI components.

Scope Defines the Difference Between Large Language Models and Artificial Intelligence

The difference between large language models and artificial intelligence comes down to scope. AI is the full domain. Large language models are one part of it.

A simple analogy helps. AI is a toolkit. Large language models are one tool inside it. Other tools handle vision, speech, or decision-making.

This difference appears in complex systems. A virtual assistant uses AI to manage tasks and process voice. Inside it, a language model handles conversation. On its own, the model cannot act beyond text.
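The assistant described above can be sketched as a small Python program in which the language model is only one part. Everything here is hypothetical: `Assistant`, `fake_speech_to_text`, and `fake_language_model` are placeholders standing in for real speech recognition and a real LLM, and the `"ACTION:"` reply convention is an assumption made up for this example. The point is the structure: the model only turns text into text, while the surrounding system handles voice input and decides whether to take an action.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Assistant:
    """Hypothetical assistant in which the language model is one component among several."""
    speech_to_text: Callable[[bytes], str]  # speech recognition: a separate AI component
    language_model: Callable[[str], str]    # text in, text out
    actions: Dict[str, Callable[[], str]]   # tools that act beyond text

    def handle(self, audio: bytes) -> str:
        text = self.speech_to_text(audio)
        reply = self.language_model(text)
        # The surrounding system, not the model, decides whether to run a tool.
        if reply.startswith("ACTION:"):
            name = reply[len("ACTION:"):].strip()
            tool = self.actions.get(name)
            return tool() if tool else f"Unknown action: {name}"
        return reply


# Stand-ins for real components (placeholders, not real models).
def fake_speech_to_text(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the "audio" is already transcribed text


def fake_language_model(prompt: str) -> str:
    if "timer" in prompt.lower():
        return "ACTION: start_timer"
    return f"You said: {prompt}"


assistant = Assistant(
    speech_to_text=fake_speech_to_text,
    language_model=fake_language_model,
    actions={"start_timer": lambda: "Timer started."},
)
print(assistant.handle(b"Set a timer"))  # Timer started.
print(assistant.handle(b"Hello there"))  # You said: Hello there
```

Removing the `actions` dictionary leaves a system that can still talk but can no longer do anything, which mirrors the point that the model alone cannot act beyond text.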

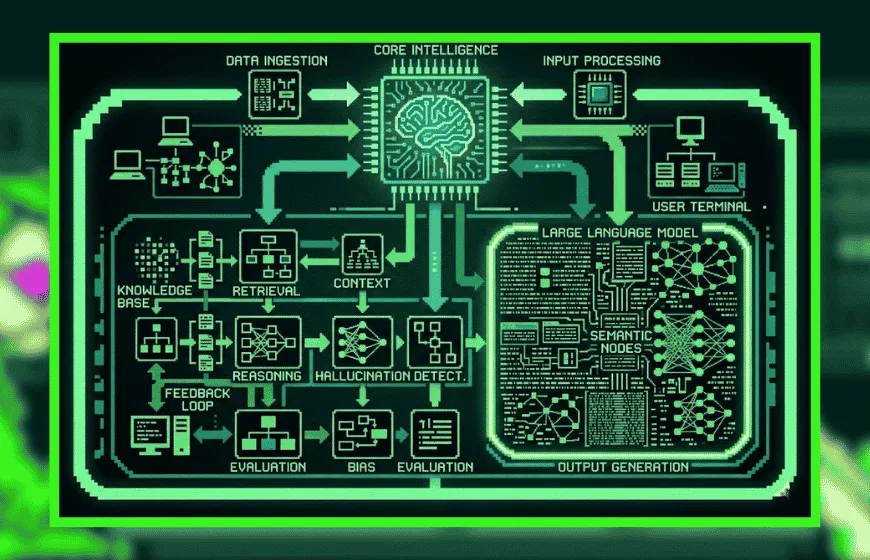

The diagram shows a large language model (magenta region) as a specialized component contained within a larger artificial intelligence system (outer blue boundary). The surrounding system handles external inputs and high-level logic, and it uses the embedded LLM as a specialized language component to process context and turn complex data into text.

Real-World Use Cases Show Clear Roles

Daily examples clarify AI vs. LLMs. AI operates in many systems. Large language models support communication tasks.

A navigation app uses AI to predict traffic and plan routes. It processes location data and maps. A chatbot uses a language model to respond in text.

In education, AI systems track progress and grade work. Language models assist with writing and explanations. The functions overlap, but they remain distinct.

Limits Reveal Practical Boundaries

Both AI and large language models have limits. These limits shape how they should be used.

Large language models can produce fluent text, yet they may be incorrect. They rely on patterns, not verified knowledge. Without external tools, they cannot confirm facts in real time.

AI systems face wider challenges. They require large datasets and careful design. They may fail in unfamiliar cases or reflect bias in training data. Research from the Stanford Institute for Human-Centered Artificial Intelligence (Stanford HAI) highlights gaps in reasoning and fairness.

These limits show that human oversight remains necessary.

Misconceptions About Consciousness Persist

Public debate often links AI with human-like thinking. This leads to confusion about consciousness in AI.

Large language models can sound human. This creates the impression of awareness. In reality, they reproduce statistical patterns in text, and fluent wording does not imply meaning or awareness behind it.

Institutions such as the Alan Turing Institute stress that current AI lacks consciousness. It has no intent, emotion, or self-awareness.

Clarifying this point helps define AI as a tool, not an independent mind.

Historical Development Explains the Rise of LLMs

AI evolved in stages. Early systems followed fixed rules. They could not adapt.

Machine learning changed that approach. Systems began to learn from data instead of relying only on hand-written rules. This improved performance in vision and speech tasks.
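The shift from fixed rules to learning from data can be shown with a toy contrast in Python, borrowing the fraud-flagging example mentioned earlier. This is a simplified sketch under stated assumptions: the function names, the 1000 cutoff, and the transaction amounts are all invented for illustration, and real fraud systems use far richer models. One function is a hand-written rule; the other picks its cutoff from labeled examples (a one-feature "decision stump").

```python
def rule_based_flag(amount):
    """Rule-based style: a fixed, hand-written rule that never changes on its own."""
    return amount > 1000


def learn_threshold(examples):
    """Machine-learning style: choose the cutoff that makes the fewest mistakes
    on labeled (amount, is_fraud) examples, i.e. a one-feature decision stump."""
    best_threshold, best_errors = None, len(examples) + 1
    for t in sorted(amount for amount, _ in examples):
        errors = sum((amount > t) != is_fraud for amount, is_fraud in examples)
        if errors < best_errors:
            best_threshold, best_errors = t, errors
    return best_threshold


# Made-up labeled history: (transaction amount, was it fraud?)
history = [(120, False), (300, False), (450, False), (600, True), (750, True), (2000, True)]
threshold = learn_threshold(history)
print(threshold)             # 450
print(rule_based_flag(750))  # False: the fixed rule misses this case
print(750 > threshold)       # True: the learned cutoff catches it
```

Passing a new labeled history to `learn_threshold` updates the cutoff automatically, while the hand-written rule stays at 1000 until a person edits it. That adaptability is the change machine learning brought, in miniature.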

Large language models emerged later. They build on the transformer, a neural network architecture introduced in 2017. Growth in computing power then enabled training on massive datasets, which led to strong gains in language tasks.

Today, LLMs represent a visible part of AI. They reflect progress, but they remain one component of a larger system.

Future Trends Point to Integration

Current trends suggest deeper integration between AI systems. Large language models will likely combine with other tools.

Future systems may link language with vision and action. For example, an assistant could describe an image and then act on what it shows. This requires multiple AI components working together.

Industry analysts, including Gartner, expect continued growth in AI adoption, with healthcare, education, and business among the sectors expanding their use. At the same time, developers aim to improve accuracy and reduce bias.

Clear Scope, Clear Use

The question “What is the difference between large language models and artificial intelligence?” has a direct answer. Artificial intelligence is the broad field. Large language models are a specialized subset focused on text.

Understanding this difference clarifies modern technology. AI powers diverse systems. LLMs handle communication. They work together, but they are not the same.

Clear definitions lead to better use. This matters as AI becomes more common in daily life.

FAQs

1. What is the difference between AI and LLMs?

AI is the broader field, while LLMs are a specific type of AI focused on language tasks.

2. Are large language models considered AI?

Yes, they are a subset of AI designed for text-based tasks.

3. What can AI do that LLMs cannot?

Other branches of AI can process images, control machines, and make decisions that go beyond language tasks.

4. Do LLMs understand language like humans?

No, they predict patterns in text rather than truly understanding meaning.

5. What are common uses of LLMs?

Chatbots, writing tools, translation, and question-answering systems.

6. Is machine learning the same as LLMs?

No, machine learning is a broader method, while LLMs are a specific application within it.

7. Will LLMs replace other types of AI?

No, they will likely integrate with other AI systems rather than replace them.