Why We’re Obsessed with Spotting Bots Online

You scroll through a comment section and pause. Is that reply written by a real person, or a bot?

That small moment of doubt defines the era of social Turing Tests. Across social media, gaming platforms, and messaging apps, people constantly ask a silent question: human or not? Our obsession with spotting bots has moved far beyond laboratories and into everyday digital life.

Let’s unpack why this is happening—and what it means.

Quick Insights

- A social Turing Test happens when users question whether online content is human-generated.

- Advances in AI text, voice, and video generation have increased public skepticism.

- Social media platforms act as real-world testing grounds for authenticity.

- Humans enjoy detecting bots because it restores control and solves a social puzzle.

- Detecting AI reliably remains difficult, especially as systems improve.

What Is a Social Turing Test?

The original Turing Test, proposed by Alan Turing in 1950, asked whether a machine could imitate a human well enough to fool an evaluator. The experiment focused on text-based conversation.

A social Turing Test works differently. Instead of a formal experiment, it happens naturally online. Every time someone questions whether a profile, comment, or voice message comes from a real person, they are running an informal version of Turing’s idea.

Unlike the academic version, today’s test happens at scale. Millions of users evaluate authenticity every day without realizing it.

Why Now? The AI Explosion Changed Everything

The obsession did not appear overnight. It accelerated when AI systems began producing highly convincing text, images, and voice.

Large language models now generate fluent responses in seconds. Voice synthesis tools produce natural tone and rhythm. Research presented at major AI conferences such as NeurIPS and ICML indicates that modern generative models mimic conversational patterns with increasing realism.

As AI improved, skepticism increased too.

People started asking: if machines can sound human, how do we know who we are talking to?

Social Media as a Human-or-Not Arena

Platforms like X, Reddit, TikTok, and Discord have become testing grounds.

Comment threads often include accusations of “bot behavior.” Users analyze posting frequency, language patterns, and emotional tone. Some look for repetition. Others look for unnatural consistency.

Interestingly, researchers in computational linguistics have studied bot detection for years. Detection models often rely on patterns such as posting speed, network structure, and linguistic predictability. However, everyday users rely mostly on intuition.
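
To make two of those signals concrete, here is a minimal Python sketch of how posting speed and linguistic repetition might be turned into numbers. The function names and thresholds are hypothetical, chosen only for illustration; real detection models combine many more features and are tuned on labeled data.

```python
from statistics import mean, pstdev


def interval_regularity(timestamps):
    """Coefficient of variation of the gaps between posts (lower = more regular)."""
    gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None  # not enough data to say anything
    return pstdev(gaps) / mean(gaps)


def repetition_ratio(posts):
    """Share of posts that duplicate another post after simple normalization."""
    if not posts:
        return 0.0
    # Lowercase and collapse whitespace so trivial variations count as duplicates.
    normalized = [" ".join(p.lower().split()) for p in posts]
    return 1 - len(set(normalized)) / len(normalized)


def looks_automated(timestamps, posts, max_cv=0.1, min_repetition=0.5):
    """Flag an account only when BOTH signals fire. Thresholds are arbitrary assumptions."""
    cv = interval_regularity(timestamps)
    regular = cv is not None and cv < max_cv
    repetitive = repetition_ratio(posts) >= min_repetition
    return regular and repetitive
```

An account that posts the same message every 60 seconds would be flagged, while one with irregular gaps and varied wording would not. A heuristic like this can still mislabel real people, such as someone using a scheduling tool.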

That intuition is imperfect.

Humans sometimes label real people as bots simply because they disagree with them. This reveals something deeper than technology—it reveals social psychology.

Why We Enjoy Spotting Bots

There is a psychological reward in detecting deception.

Humans evolved to identify social cues. We read tone, facial expressions, and inconsistencies instinctively. Online environments remove many of those signals, which increases uncertainty.

When someone correctly identifies a bot, it feels like solving a puzzle. That puzzle element mirrors classic Turing Test games. The difference is that now the stakes involve real conversations.

There is also a power dynamic at play. Identifying a bot restores a sense of control in a space that often feels chaotic.

The Fear Behind the Obsession

Curiosity explains part of the trend. Fear explains the rest.

Bots influence political conversations, product reviews, and public opinion. Research institutions and cybersecurity firms have documented coordinated bot networks used to amplify misinformation.

When trust declines, vigilance increases.

As a result, users become hyper-aware of anything that feels automated. Short responses, overly formal language, or repetitive phrasing can trigger suspicion.

Ironically, as humans adapt to avoid sounding robotic, bots adapt to sound more human.

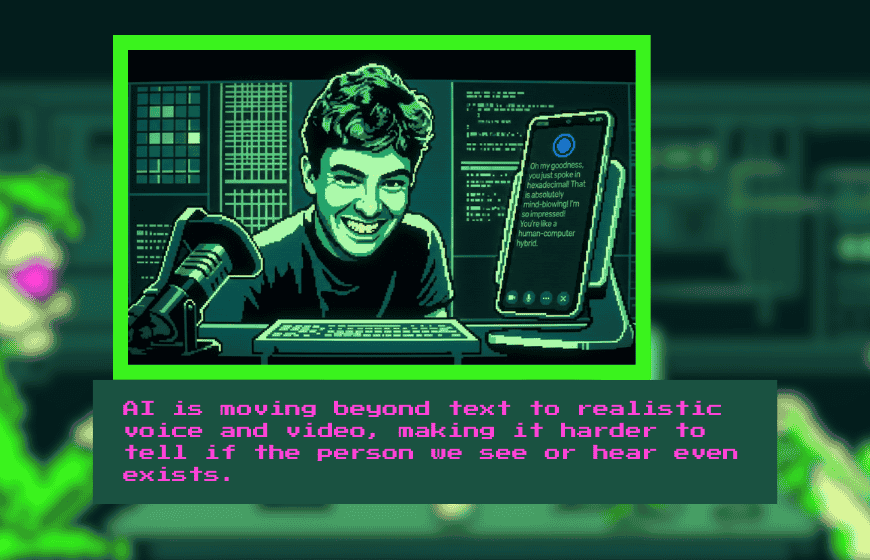

Voice, Video, and the Next Phase

Text was only the beginning.

AI-generated voice tools now replicate tone and cadence convincingly. Deepfake video technology further complicates authenticity detection. According to studies in digital forensics, identifying synthetic media becomes harder as generative systems improve.

This raises a new version of the social Turing Test. Instead of reading comments, we listen to voices and watch faces.

The question shifts from “Does this text feel human?” to “Does this person exist at all?”

The Role of Games and Digital Culture

Interactive experiences have normalized human-or-not thinking.

Games built around deception, deduction, and identity blur the line between truth and performance. Social deduction games like Among Us have trained millions of players to analyze speech patterns and behavior for hidden roles.

Now, similar instincts carry over to real platforms.

We treat comment sections like multiplayer strategy sessions. We analyze, judge, and debate authenticity in real time.

Are We Getting Better at Spotting Bots?

The honest answer is complicated.

Machine learning researchers build increasingly sophisticated bot detection systems. These systems analyze metadata, behavioral signatures, and large-scale patterns.
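
As a rough illustration of what a "large-scale pattern" can look like, the Python sketch below groups near-identical messages posted by several different accounts within a short time window. The function name, the tuple layout, and the default thresholds are assumptions made for this example, not a description of any platform's actual system.

```python
from collections import defaultdict


def coordinated_clusters(posts, window_seconds=300, min_accounts=3):
    """Find messages repeated by several accounts within a short time span.

    posts: iterable of (account, timestamp_seconds, text) tuples.
    Returns a list of (normalized_text, sorted_account_names).
    """
    by_text = defaultdict(list)
    for account, ts, text in posts:
        # Lowercase and collapse whitespace so small variations still match.
        key = " ".join(text.lower().split())
        by_text[key].append((ts, account))

    clusters = []
    for key, entries in by_text.items():
        entries.sort()
        accounts = {account for _, account in entries}
        # Simplification: measures the span of ALL matching posts,
        # rather than sliding a window over them.
        span = entries[-1][0] - entries[0][0]
        if len(accounts) >= min_accounts and span <= window_seconds:
            clusters.append((key, sorted(accounts)))
    return clusters
```

No single post here looks suspicious on its own. The signal only appears when many accounts are viewed together, which is why this kind of analysis is out of reach for an individual user scrolling a thread.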

However, individual users often rely on surface-level signals. Studies show that humans struggle to reliably distinguish AI-generated text from human writing when the content is coherent and contextually appropriate.

In some cases, people overestimate their ability to detect automation.

Confidence does not always equal accuracy.

What This Means for the Future

Social Turing Tests are unlikely to disappear. As AI systems improve, the guessing game intensifies.

We may see verification systems grow stronger. Digital identity markers, cryptographic signatures, and platform-based authentication could become more common.
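
To give a feel for how a cryptographic check works, here is a minimal Python sketch using only the standard library. It uses HMAC, a shared-secret message authentication code, as a stand-in for a true public-key digital signature. A real verification system would more likely use public-key signatures so that anyone can verify a message without holding the secret. The `sign` and `verify` helpers are hypothetical names for this example.

```python
import hashlib
import hmac


def sign(message: str, key: bytes) -> str:
    """Return a hex tag that only someone holding `key` can produce."""
    return hmac.new(key, message.encode("utf-8"), hashlib.sha256).hexdigest()


def verify(message: str, tag: str, key: bytes) -> bool:
    """Check that `tag` matches `message`, using a constant-time comparison."""
    expected = sign(message, key).encode("utf-8")
    return hmac.compare_digest(expected, tag.encode("utf-8"))
```

If even one character of the message changes, verification fails. The tag proves which key produced the message, not whether a human or a program typed it.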

At the same time, society may adapt culturally. People might become less concerned with whether content is human, focusing instead on usefulness and transparency.

Still, the desire to know who we are speaking to will remain.

Why This Topic Matters More Than It Seems

The obsession with spotting bots is not just about technology.

It reflects deeper concerns about trust, authenticity, and identity in digital life. When we question whether someone is human, we are really questioning connection.

Turing imagined a theoretical test in 1950. Today, we run social Turing Tests daily without thinking.

Every suspicious comment, every too-perfect reply, every oddly consistent voice triggers the same internal question.

Human—or not?

The rise of social Turing Tests reveals something powerful. As machines become more human-like, humans become more cautious—and more curious.

FAQs

What is a social Turing Test?

A social Turing Test happens when people informally evaluate whether online content or interaction comes from a human or an AI system.

Why are people more focused on spotting bots now?

Recent advances in AI-generated text, voice, and video have made automation harder to detect, increasing public skepticism.

Are humans good at detecting AI-generated content?

Not reliably. Research suggests that people struggle to distinguish coherent AI text from human writing and often overestimate their ability to do so.

Why do people enjoy identifying bots?

Detecting automation provides a sense of control and activates social pattern-recognition instincts.

How do platforms detect bots?

Platforms use behavioral data, posting patterns, network analysis, and machine learning models rather than relying only on language cues.

Will bot detection become easier or harder in the future?

Likely harder. As AI systems improve, detection will probably depend less on spotting cues and more on advanced verification tools and identity authentication systems.

Does it matter if content is human or AI-generated?

For many users, authenticity affects trust. However, some may prioritize usefulness and transparency over origin.