Top 5 Games Like Human or Not to Test Your Intuition

If you have played HumanOrNot, you already know the feeling. You are mid-conversation. Everything seems normal. Maybe even friendly. Then the result appears, and you were completely wrong.

That moment sticks.

Here is the thing: in 2026, it hits harder because AI responses have become smoother, faster, and harder to catch through obvious tells. Players on Reddit keep calling it “confidence erosion.” That label fits. The more you play, the less certain you feel about your own judgment.

I used to assume I could always tell when something felt “off,” but a few rounds in, that certainty disappeared.

Below are five games that recreate that same tension. Each one tests a slightly different instinct.

Quick Insights

- HumanOrNot-style games create “confidence erosion” by repeatedly challenging your assumptions

- The concept traces back to the 1950 Turing Test but is now widely accessible through browser games

- QuillBot focuses on visual detection, while TuringTest.live emphasizes logical consistency in conversation

- AI Impostor highlights how AI blends into chaotic human environments like Reddit threads

- SpyParty shows that detection is not just about language but also behavior and movement patterns

- CivAI reveals how emotional conversations can feel real yet follow overly structured arcs

- These games improve awareness through rapid feedback, not guaranteed accuracy

The Idea Started Long Before These Games Existed

This concept is not new. It goes back to 1950.

Alan Turing introduced a simple but powerful test in his paper “Computing Machinery and Intelligence.” Rather than debate whether machines can “think,” he proposed what he called the imitation game and asked a practical question: if a human cannot reliably tell whether they are talking to a machine or a person, does the distinction matter?

That became the Turing Test.

For decades, it stayed inside labs. Early systems like ELIZA in the 1960s gave people a glimpse, but they broke quickly under pressure. Ask a few deeper questions, and the illusion collapsed.


What changed in the 2020s was scale. Language models trained on massive datasets started producing responses that felt natural enough to pass casual conversation.

Now the test is not theoretical. It is playable.

Top Games That Mess With Your Confidence

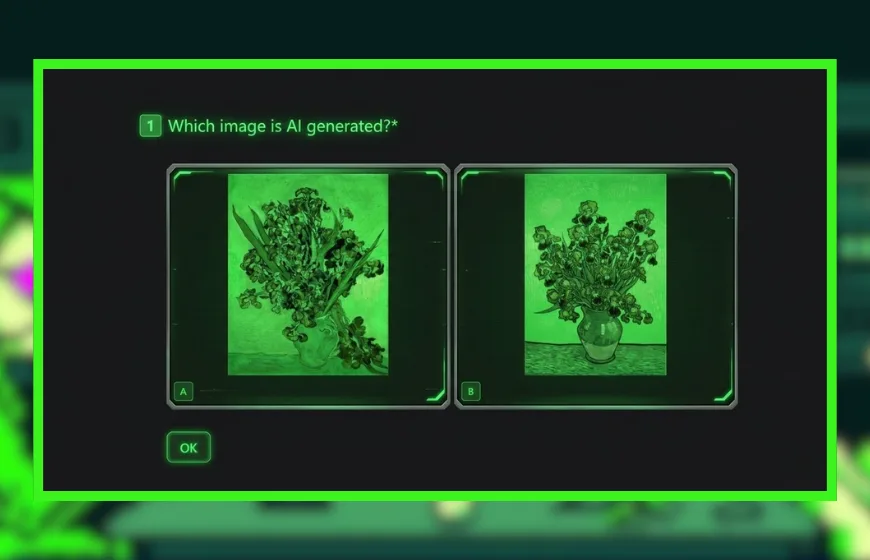

1. QuillBot’s Human or AI Game: Visual Detection Instead of Chat

Not all detection happens through text.

QuillBot’s Human or AI Game flips the format. You look at images and decide whether they are real or generated. Simple setup. Hard execution.

In 2026, the obvious clues are gone. Extra fingers are rare. Distortions are subtle.

The real tells are quieter:

- Lighting that does not match across the scene

- Skin textures that look slightly over-smoothed

- Reflections in the eyes that do not align

- Background depth that feels just a bit flat

This is slower. More analytical.

I actually love that it forces you to pause instead of react. You start noticing how light behaves in real photos, which is not something most people consciously track.

2. TuringTest.live: Where Conversations Turn Into Interrogation

This one feels different immediately.

TuringTest.live is less casual. It feels like a structured challenge. You are not chatting for fun. You are testing for cracks.

Players often set traps. They introduce contradictions. They check memory consistency. They look for logical slips.

That changes your mindset.

AI responses tend to stay clean. Almost too clean. Humans drift. They contradict themselves. They forget details.

One player described it as “spotting over-optimization.” That phrase sticks because it captures the difference perfectly.

To be honest, it can get tiring. You are constantly analyzing instead of just talking.

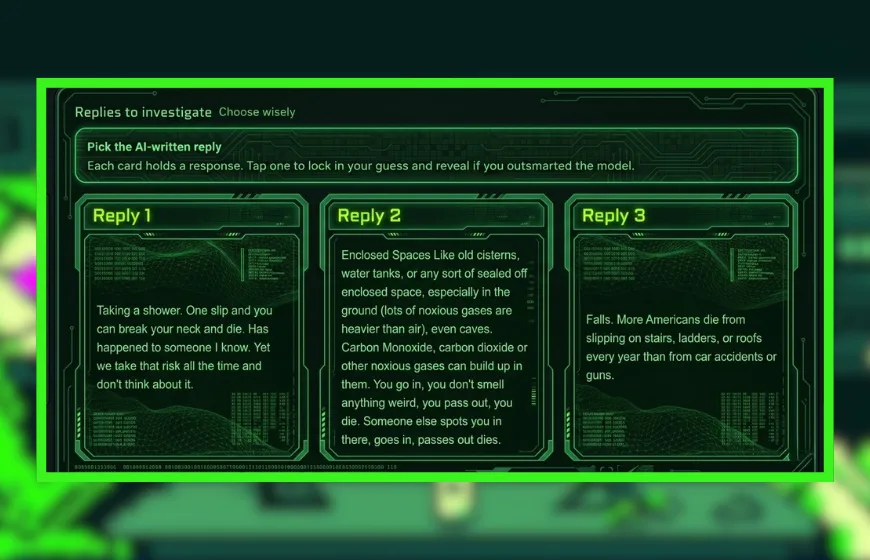

3. AI Impostor: Chaos Makes It Harder, Not Easier

This game sounds simple at first.

You get a set of Reddit-style comments. You pick which one is AI-generated.

Easy, right? Not really.

Real Reddit threads are messy. People ramble. They jump topics. They mix sarcasm with sincerity in the same sentence.

AI tends to be smoother. Slightly more organized. That is the problem.

The hardest rounds are emotional threads. The AI blends in by being just a bit too balanced. Not robotic. Just cleaner than the chaos around it.

I am not sold on the idea that “better writing” always signals a human here. Sometimes it is the opposite.

4. SpyParty: Forget Words, Watch Movement

This one drops language entirely.

In SpyParty, one player hides among AI-controlled characters. The other tries to identify the human.

Everything comes down to movement.

Beginners get caught because they act naturally. That sounds counterintuitive, but it makes sense. Humans hesitate. They adjust mid-step. They overcorrect.

AI characters follow patterns. Their timing is consistent. Their paths are predictable.

To blend in, you have to act less human. That is the twist.

It turns detection into something physical. Rhythm replaces language.

5. CivAI “We Need to Talk”: Emotional Patterns Under Pressure

This one leans into emotion.

CivAI’s “We Need to Talk” puts you in tense conversations with AI-driven characters. Arguments. Conflict. Emotional exchanges.

It can feel real. Uncomfortably real.

But listen closely.

AI-driven conversations often follow clean arcs. Conflict rises. It resolves neatly. The structure is almost too balanced.

Humans are messier. They interrupt themselves. They loop back. They derail their own arguments.

Anyway, this game is less about spotting mistakes and more about sensing emotional symmetry versus emotional chaos.

Why These Games Feel Addictive

There is a clear psychological loop at work.

You make a prediction. You get instant feedback. You adjust.

That loop matters because of something simple: prediction error. When you guess wrong, your brain updates its model. When you guess right, it reinforces the pattern.

Fast feedback accelerates learning.
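If you want to see that loop in miniature, here is a rough sketch of a prediction-error update. It is a simplified textbook-style rule, not a claim about how any of these games or your brain actually work. The function name, the learning rate of 0.2, and the sample round results are all made up for illustration.

```python
def update_estimate(estimate: float, outcome: float, learning_rate: float = 0.2) -> float:
    """Nudge an estimate toward the observed outcome by a fraction of the error."""
    prediction_error = outcome - estimate  # positive if you guessed too low
    return estimate + learning_rate * prediction_error


# Hypothetical belief: "polished writing means AI", starting out very confident.
belief = 0.9

# Invented results: 1.0 = the polished message was AI, 0.0 = it was a human.
round_results = [0.0, 0.0, 1.0, 0.0]

for outcome in round_results:
    belief = update_estimate(belief, outcome)
    print(f"updated belief: {belief:.3f}")
```

Each wrong guess pulls the belief further than a right guess pushes it back, which is roughly what “confidence erosion” feels like from the inside.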

Also, the stakes feel modern. Online, you can no longer assume the other side is human. These games simulate that uncertainty in a safe space.

It feels like play. It is actually training.

Why This Matters More Than It Seems

At a surface level, these are just games. You chat. You observe. You guess.

But the real value is calibration.

Each round forces you to question your assumptions. You might trust polished writing too much. Or distrust it unfairly. You might rely on emotional tone. Then get fooled anyway.

That friction is useful.
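One way to make calibration concrete is to write down how sure you were before each reveal and score yourself afterward. The sketch below uses the Brier score, a standard measure of how well stated confidence matches outcomes. None of the games mentioned here report it, and the logged rounds are invented for the example.

```python
def brier_score(guesses: list[tuple[float, bool]]) -> float:
    """Mean squared gap between stated confidence and what actually happened.

    Each guess is (confidence that the other side is AI, whether it was AI).
    0.0 is a perfect score; always answering 0.5 scores 0.25.
    """
    if not guesses:
        raise ValueError("need at least one guess")
    total = sum((confidence - float(was_ai)) ** 2 for confidence, was_ai in guesses)
    return total / len(guesses)


# Hypothetical log of four rounds: (how sure you were it was AI, was it AI?)
rounds = [(0.9, False), (0.8, True), (0.6, True), (0.95, False)]
score = brier_score(rounds)
print(f"Brier score: {score:.3f}")
```

A score above 0.25 means you would have done better by shrugging and saying “50/50” every time, which is a humbling but useful thing to learn.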

The internet changed. The default assumption of “there is a human behind this” no longer holds.

These games do not create that shift. They expose it.

You will not become perfect at detecting AI. No one will. The systems will keep improving.

But perfection is not the goal.

Awareness is.

FAQs

What are the best games like Human or Not?

QuillBot’s Human or AI Game, TuringTest.live, AI Impostor, SpyParty, and CivAI’s “We Need to Talk” offer similar AI detection or behavioral judgment mechanics.

Is Human or Not still active in 2026?

Yes, HumanOrNot.so remains active and continues evolving as AI models improve.

How do you detect AI in chat games?

Players often look for overly structured responses, emotional stability, or symmetrical argument patterns.

Are AI detection games accurate?

They provide practice and awareness, but no method guarantees perfect detection as AI models improve.

What makes SpyParty similar to Human or Not?

Both rely on identifying subtle patterns, though SpyParty focuses on movement behavior rather than text.

Why do these games feel psychologically intense?

They challenge confidence in pattern recognition and provide immediate feedback when predictions fail.

Do AI detection skills improve over time?

Yes, repeated exposure sharpens awareness, though AI systems also continue to improve.