Humans Are Getting Harder to Distinguish from LLMs in Short-Form Chat

As we switch to short, rapid-fire DMs, humans are starting to mimic the same compressed patterns that AI is now optimized to replicate.

Key Points

- Short-form digital communication is reshaping how humans express identity online.

- Modern LLMs are optimized for tone, memory, and conversational mimicry.

- The Turing Test behaves differently in compressed chat environments.

- Behavioral signals may soon matter more than linguistic cues in AI detection.

Let’s be honest... the way we talk to each other is shrinking. The days of long emails and structured paragraphs are fading, replaced by a "compression culture" of five-word DMs and rapid-fire Slack pings. Whether we’re catching up with a friend or playing a quick round of Human or Not, our conversations now happen in tiny, high-speed bursts.

But here’s the catch—as our messages get shorter, the "human" signals we used to rely on are starting to vanish.

In recent tests, even people who think they can spot a bot a mile away were fooled nearly 40% of the time. And it’s not just that AI is getting smarter. It’s that we are changing, too. We’re drifting toward the same short, efficient patterns that machines are already great at mimicking. In short-form chat, the line between us and the bots has become razor-thin, driven by a mix of new tech, psychology, and the way our platforms force us to communicate.

The Architectural Evolution of LLMs

1. From Retrieval to "Vibe-Check" Optimization

Earlier iterations of AI (pre-2024) often behaved like sophisticated search engines, optimized for factual accuracy and structured output. However, the 2026 class of models, such as the latest Gemini and GPT iterations, has undergone a fundamental shift. Developers now prioritize "Social Mimicry" and "Tonal Fluidity."

We have moved from "Answer-the-Question" AI to "Hold-the-Vibe" AI. These models are no longer just looking for the correct data; they are calculating the most socially appropriate level of snark, warmth, or hesitation required for a specific interaction.

2. The Breakthrough in Contextual Memory

One of the primary "tells" of early AI was its "Goldfish Memory." If you told a joke in message one, the bot would have forgotten the context by message five. Modern systems utilize dynamic context windows and short-term vector storage, allowing them to circle back to previous comments naturally.

When a bot says, "Wait, that’s like what you said earlier about your cat," it triggers a psychological "trust response" in humans. We equate memory with consciousness. By mastering continuity, AI has bypassed one of the strongest defensive barriers of the Turing Test.
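To make the "circle back" idea concrete, here is a toy sketch of short-term recall. It is only an illustration: the bag-of-words `embed` function stands in for a learned embedding, and the names `embed`, `cosine`, and `recall` are hypothetical rather than taken from any real model or library.

```python
import math
import re
from collections import Counter
from typing import Optional


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (a stand-in for a learned one)."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recall(history: list, new_message: str) -> Optional[str]:
    """Return the earlier message most similar to the new one, or None if nothing overlaps."""
    query = embed(new_message)
    scored = [(cosine(embed(m), query), m) for m in history]
    best_score, best = max(scored, default=(0.0, None))
    return best if best_score > 0 else None


history = [
    "my cat knocked a glass off the table again",
    "work was long today",
    "thinking about pizza tonight",
]
# The new message shares "cat" and "the" with the first entry only,
# so that entry is the one a bot could "circle back" to.
callback = recall(history, "the cat is staring at me")
```

A production system would use far richer representations, but the shape is the same: store recent turns, score them against the new message, and surface the closest one.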

3. Training on the "Digital Wilds"

The data diet of 2026 models has shifted away from Wikipedia and toward the "Digital Wilds." Models are now trained on billions of Discord logs, WhatsApp-style exchanges, and Reddit comment chains.

- The Grammar of the Fragment: They have learned that humans rarely use periods in DMs.

- Implied Subjects: They understand that "Going now" is more human than "I am going now."

- Elliptical Phrasing: They mimic the way humans leave thoughts unfinished to invite a response.

The Psychology of "Compression Culture"

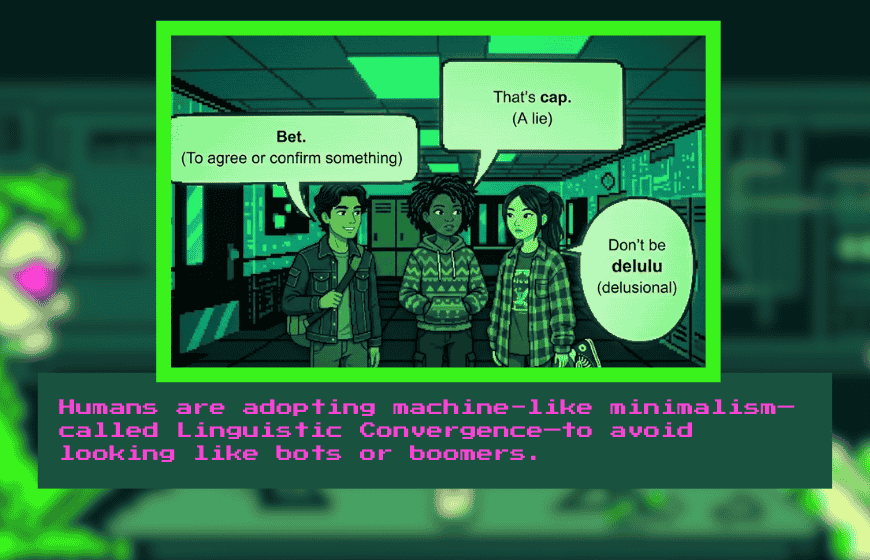

As much as AI is rising to meet us, humans are descending into machine-like patterns. This is known as Linguistic Convergence.

The Gen Z Minimalism Factor

For younger generations, verbosity is often viewed as a sign of "bot-like" formality or "boomer" energy. Minimalism, meaning using the fewest characters possible, is the current social currency. When humans compress their language to "nw," "omw," or "lol true," they are stripping away the linguistic metadata (rhythm, unique vocabulary, complex syntax) that distinguishes them from a model.

Emoji Standardization

Emojis were supposed to add nuance, but they have become standardized templates. If a human and a bot both use the "💀" emoji to signal laughter, the distinction between them vanishes. We are using a shared, limited library of icons to express complex emotions, effectively "flattening" our emotional output to a level that AI can easily simulate.

The Mirroring Reflex

Psychologically, humans naturally mirror their conversational partners to build rapport. In tests, when humans interact with a highly fluent AI, they unconsciously begin to simplify their own sentences to match the AI’s efficiency. This creates a feedback loop where the human becomes more "robotic" to satisfy the interaction’s flow.

The Turing Test Paradox in Short-Form Chat

Why Brevity Protects the Machine

In the original Turing Test, length was the enemy of the machine. The longer you talked to a computer, the more likely it was to trip over a logical contradiction.

In 2026, the format has flipped. Short-form chat is a shield for AI.

- Reduced Risk Surface: If a bot only speaks 7 words, it has far fewer chances to "hallucinate" or make a complex logic error than it would in a long answer.

- Fluency Over Depth: In a 60-second game of Human or Not, players don't have time to ask about the nature of the soul. They judge based on "surface fluency"—how fast the reply came and if it used the right slang.
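A back-of-the-envelope way to see why length matters: assume, purely for illustration, that each word a bot produces has a small, independent chance of giving it away. The chance of at least one slip then grows quickly with reply length. The function name and the 1% figure below are hypothetical assumptions, not measured values.

```python
def chance_of_slip(words: int, per_word_error: float) -> float:
    """Probability of at least one error in a reply of `words` words,
    assuming each word independently goes wrong with probability `per_word_error`."""
    return 1.0 - (1.0 - per_word_error) ** words


# Hypothetical 1% per-word error rate, chosen only to show the trend.
short_reply = chance_of_slip(7, 0.01)    # roughly 0.07
long_reply = chance_of_slip(200, 0.01)   # roughly 0.87
```

Under these assumptions, a seven-word DM slips about one time in fifteen, while a 200-word answer slips most of the time. Real errors are not independent per word, so treat the numbers as a trend rather than a measurement.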

The "Overthinking" Human Tell

In our internal trials, we found a fascinating inversion: Humans often fail the Turing Test because they try too hard to pass it.

When a human participant is told "prove you aren't a bot," they become performative. They might type: "I am definitely a real person, I just ate a very messy taco lol."

This feels "fake" to a judge. Paradoxically, the AI—which doesn't feel the need to prove anything—often sounds more relaxed and, therefore, more human.

Industry Myths Debunked

Myth 1: "Typos Prove You're Human"

This is the most common mistake made by users in 2026. Developers now intentionally program "Human-Like Error Rates" into conversational models. AI can be prompted to make "fat-finger" mistakes (e.g., typing "teh" instead of "the") or to skip capitalization. Conversely, many humans have auto-correct enabled, making their text perfectly clean.
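As a rough illustration of how cheap a "fat-finger" effect is to produce, the sketch below swaps adjacent letters at a configurable rate. This is a hypothetical post-processing step written for this article (the name `add_typos` and its parameters are assumptions), not a description of how any particular product works.

```python
import random


def add_typos(text: str, rate: float, seed: int = 0) -> str:
    """Swap adjacent letters with probability `rate` per eligible position."""
    rng = random.Random(seed)  # seeded so a given input always yields the same output
    chars = list(text)
    i = 0
    while i < len(chars) - 1:
        both_letters = chars[i].isalpha() and chars[i + 1].isalpha()
        if both_letters and rng.random() < rate:
            chars[i], chars[i + 1] = chars[i + 1], chars[i]
            i += 2  # skip the swapped pair so the next step cannot undo it
        else:
            i += 1
    return "".join(chars)


clean = add_typos("the cat", rate=0.0)   # unchanged: "the cat"
messy = add_typos("the cat", rate=1.0)   # every eligible pair swapped: "hte act"
```

A realistic rate would sit somewhere between these two extremes, which is exactly why a stray typo tells a judge very little.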

Myth 2: "AI Can't Do Sarcasm"

Sarcasm is essentially a pattern of saying the opposite of the expected sentiment. With the massive increase in training data from social media, LLMs have become masters of dry, observational humor. If you rely on a joke to "catch" a bot, you will likely be fooled.

Myth 3: "Logical Inconsistency is a Bot Signal"

Actually, in 2026, perfect logic is a bot signal. Humans are famously inconsistent. We change our minds mid-sentence, forget what we said two minutes ago, and hold contradictory opinions. A model that is "too perfect" is often the easiest to spot for a trained eye.

Myth 4: "Emotional Depth is a Human Monopoly"

While AI may not feel the emotion, it is an expert at modeling the vocabulary of emotion. It knows exactly which words of empathy to use when you mention a bad day. The "empathy gap" has closed at the linguistic level, even if the sentience gap remains wide.

Tactical Guide: How to Spot a Bot in the Wild

If you want to distinguish between a human and an LLM in a DM or a chat game, you must look at behavioral metadata rather than the text itself.

- Response Latency Patterns:

  - AI: Responds in a steady "processing" rhythm, regardless of the question's complexity.

  - Human: Takes 2 seconds to say "hi," but might take 15 seconds to respond to a complex joke. Look for the variance in response time.

- Topic Derailment:

  - Ask a question that requires "breaking the fourth wall." Instead of asking "What is the weather?", ask "If you had to describe this conversation using only the color purple, how would you do it?" AI often tries to be helpful; humans often respond with confusion or "what? lol."

- Structural Symmetry:

  - Bots tend to match your sentence length perfectly. If you send three short messages, the bot will likely respond with three short messages. Try breaking your own rhythm to see if the partner adapts too perfectly.
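Two of the signals above, latency variance and structural symmetry, are easy to quantify. The sketch below is one possible way to score them, assuming you have logged reply delays in seconds and message lengths in words. The function names and sample numbers are hypothetical, and no threshold here should be read as a validated cutoff.

```python
from statistics import mean, pstdev


def latency_variation(latencies_s: list) -> float:
    """Coefficient of variation of reply delays (std / mean). Low means a steady rhythm."""
    if len(latencies_s) < 2 or mean(latencies_s) == 0:
        return 0.0
    return pstdev(latencies_s) / mean(latencies_s)


def length_mismatch(my_lengths: list, their_lengths: list) -> float:
    """Mean relative gap between paired message lengths. 0.0 means perfect mirroring."""
    pairs = list(zip(my_lengths, their_lengths))
    if not pairs:
        return 0.0
    return mean(abs(a - b) / max(a, b, 1) for a, b in pairs)


steady = latency_variation([3.0, 3.1, 2.9, 3.0])   # near zero: machine-like rhythm
bursty = latency_variation([2.0, 15.0, 4.0, 9.0])  # much higher: human-like variance
mirror = length_mismatch([3, 12, 5], [3, 12, 5])   # 0.0: partner mirrors you exactly
```

Neither score proves anything on its own. A partner with a near-zero latency variation who also mirrors your message lengths is simply worth a closer look.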

From Linguistic to Biometric Detection

When AI can mimic our slang, our typos, and our emojis, the only remaining tells will be biometric and behavioral.

We will look for the "micro-hesitations" in a typing indicator or the way a cursor moves across a screen. The battle for authenticity is moving from what we say to how we exist in the digital space.

The closing gap between human and AI communication is a mirror held up to our own digital evolution. We aren't just building smarter machines; we are building a more efficient, compressed way of speaking to one another. To stay "human" in the eyes of the algorithm, we may eventually have to embrace our most inefficient traits: our messiness, our slow responses, and our beautiful inconsistencies.

FAQs

1. Why is it harder to distinguish humans from LLMs in short-form chat?

Short messages remove complex linguistic signals, leaving only surface fluency—something modern LLMs replicate well.

2. How has the Turing Test changed in short-form messaging?

In short chats, brevity protects AI from making logical errors, shifting detection from depth to speed and tone.

3. What is linguistic convergence in digital communication?

It refers to humans adapting their language patterns to match conversational partners, including AI systems.

4. Can AI intentionally make typos to seem human?

Yes. Modern models can simulate human-like error rates, including typos and casual grammar shifts.

5. Why does short-form chat favor AI performance?

Short exchanges reduce opportunities for contradiction or hallucination, making AI appear consistent and fluent.

6. Are emojis reliable signals of human identity?

No. Emoji usage has become standardized, making it easy for AI to replicate common emotional signals.

7. What behavioral signs may reveal AI in the future?

Response latency patterns, rhythm consistency, and structural symmetry may become stronger detection signals than text itself.